Probabilistic Riemannian submanifold learning with wrapped Gaussian process latent variable models

May 23, 2018

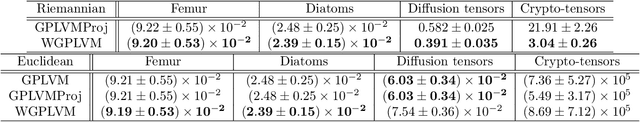

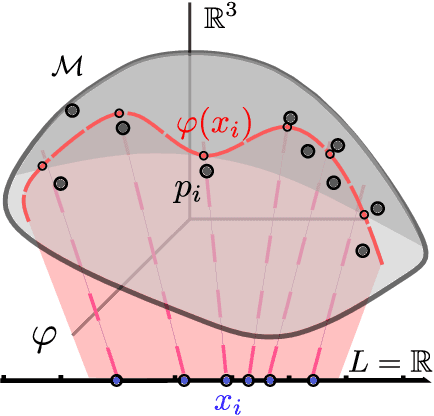

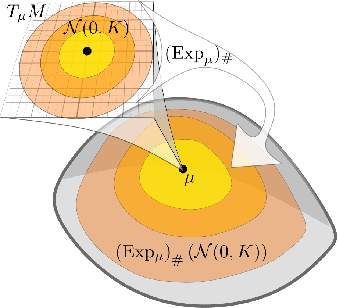

Latent variable models learn a stochastic embedding from a low-dimensional latent space onto a submanifold of the Euclidean input space, on which the data is assumed to lie. Frequently, however, the data objects are known to satisfy constraints or invariances, which are not enforced by traditional latent variable models. As a result, significant probability mass is assigned to points that violate the known constraints. To remedy this, we propose the wrapped Gaussian process latent variable model (WGPLVM). The model allows non-linear, probabilistic inference of a lower-dimensional submanifold where data is assumed to reside, while respecting known constraints or invariances encoded in a Riemannian manifold. We evaluate our model against the Euclidean GPLVM on several datasets and tasks, including encoding, visualization and uncertainty quantification.
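To make the idea of respecting a constraint concrete, here is a minimal sketch of a wrapped Gaussian on the unit sphere, where the constraint is unit norm. It is an illustration under stated assumptions, not the authors' implementation: the abstract does not spell out the construction, so the choice of the sphere, the function names `exp_map_sphere` and `sample_wrapped_gaussian`, and the value of `sigma` are all hypothetical. The sketch draws a Gaussian vector in the tangent space at a base point and pushes it through the Riemannian exponential map, so every sample lands on the manifold.

```python
import math
import random


def exp_map_sphere(base, tangent):
    """Exponential map on the unit sphere.

    Follows the geodesic (great circle) starting at `base` in the
    direction `tangent`, for a distance equal to the tangent's norm.
    Assumes `base` has unit norm and `tangent` is orthogonal to it.
    """
    norm = math.sqrt(sum(t * t for t in tangent))
    if norm < 1e-12:
        # A zero tangent vector maps to the base point itself.
        return list(base)
    return [
        math.cos(norm) * b + math.sin(norm) * t / norm
        for b, t in zip(base, tangent)
    ]


def sample_wrapped_gaussian(base, sigma, rng):
    """Draw one sample from an (assumed isotropic) wrapped Gaussian."""
    # Isotropic Gaussian noise in the ambient Euclidean space.
    noise = [rng.gauss(0.0, sigma) for _ in base]
    # Remove the component along `base` to land in the tangent space.
    radial = sum(n * b for n, b in zip(noise, base))
    tangent = [n - radial * b for n, b in zip(noise, base)]
    # Wrap the tangent vector onto the sphere.
    return exp_map_sphere(base, tangent)


rng = random.Random(0)
base = [0.0, 0.0, 1.0]  # north pole, chosen arbitrarily for illustration
samples = [sample_wrapped_gaussian(base, 0.5, rng) for _ in range(1000)]

# Every wrapped sample satisfies the unit-norm constraint up to rounding.
norms = [math.sqrt(sum(x * x for x in s)) for s in samples]
max_deviation = max(abs(n - 1.0) for n in norms)
```

By contrast, adding the same Gaussian noise directly to `base` in Euclidean space would almost never produce a unit-norm point, which is the kind of probability mass on constraint-violating points that the abstract describes.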
