Privacy Analysis of Online Learning Algorithms via Contraction Coefficients


Dec 20, 2020

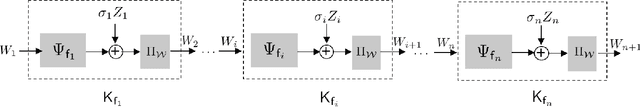

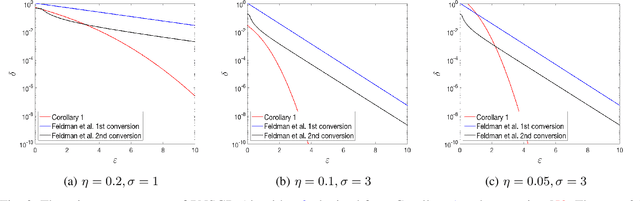

We propose an information-theoretic technique for analyzing the privacy guarantees of online algorithms. Specifically, we demonstrate that the differential privacy guarantees of iterative algorithms can be determined by a direct application of contraction coefficients derived from strong data processing inequalities for $f$-divergences. Our technique relies on generalizing Dobrushin's contraction coefficient for total variation distance to an $f$-divergence known as the $E_\gamma$-divergence, which in turn is equivalent to approximate differential privacy. As an example, we apply our technique to derive the differential privacy parameters of gradient descent. We also show that this framework can be tailored to batch learning algorithms that can be implemented with one pass over the training dataset.
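To make the link between the $E_\gamma$-divergence and approximate differential privacy concrete, here is a minimal sketch for finite output alphabets. It assumes the standard definition $E_\gamma(P\|Q) = \sum_x \max(P(x) - \gamma Q(x), 0)$, under which a mechanism is $(\varepsilon, \delta)$-differentially private when $E_{e^\varepsilon}(P\|Q) \le \delta$ for the output distributions $P, Q$ induced by every pair of neighboring inputs. The function names and the randomized-response example are illustrative choices, not taken from the paper.

```python
import math
from itertools import permutations


def e_gamma(p, q, gamma):
    """E_gamma-divergence between two discrete distributions.

    Assumes p and q are probability vectors over the same finite alphabet.
    For gamma = 1 this reduces to the total variation distance.
    """
    return sum(max(pi - gamma * qi, 0.0) for pi, qi in zip(p, q))


def dobrushin_coefficient(kernel):
    """Dobrushin's contraction coefficient of a row-stochastic matrix:
    the largest total variation distance between any two rows."""
    return max(e_gamma(r, s, 1.0) for r, s in permutations(kernel, 2))


def randomized_response(eps):
    """Illustrative mechanism (hypothetical example): report the true bit
    with probability e^eps / (1 + e^eps). Returns the two output
    distributions, one per input bit."""
    keep = math.exp(eps) / (1.0 + math.exp(eps))
    return [keep, 1.0 - keep], [1.0 - keep, keep]


eps = 1.0
p, q = randomized_response(eps)

# delta needed at privacy level eps: E_{e^eps}(P || Q), taken in both directions.
delta = max(e_gamma(p, q, math.exp(eps)), e_gamma(q, p, math.exp(eps)))

# Viewing the mechanism as a Markov kernel, its rows are p and q.
eta_tv = dobrushin_coefficient([p, q])
```

Under these assumptions, `delta` evaluates to zero up to floating-point error, matching the fact that randomized response is $(\varepsilon, 0)$-differentially private, while `eta_tv` equals $(e^\varepsilon - 1)/(e^\varepsilon + 1)$, the total variation distance between the two rows.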
