Predicting Flight Delay with Spatio-Temporal Trajectory Convolutional Network and Airport Situational Awareness Map

May 19, 2021

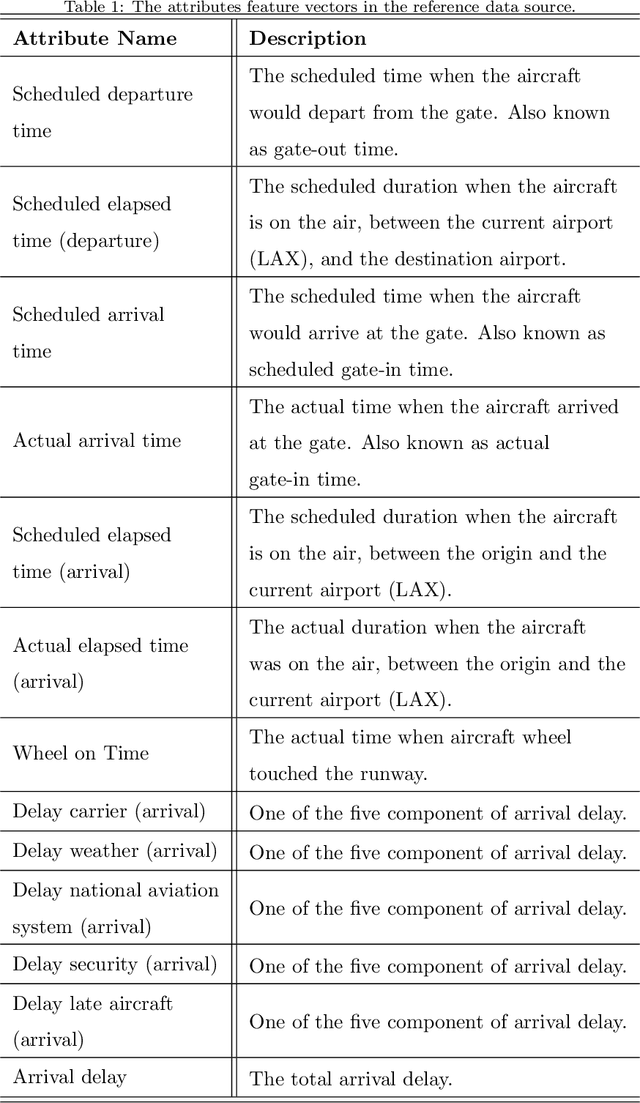

To model and forecast flight delays accurately, it is crucial to harness vehicle trajectory and contextual sensor data from airport tarmac areas. These heterogeneous sensor data, if modelled correctly, can be used to generate a situational awareness map. Existing techniques apply traditional supervised learning methods to historical data, contextual features and route information among different airports to predict flight delay. These techniques are inaccurate and predict only arrival delay, not departure delay, which is essential to airlines. In this paper, we propose a vision-based solution that achieves high forecasting accuracy and is applicable to the airport. Our solution leverages a snapshot of the airport situational awareness map, which contains the trajectories of aircraft and contextual features such as weather and airline schedules. We propose an end-to-end deep learning architecture, TrajCNN, which captures both the spatial and temporal information in the situational awareness map. Additionally, we show that the situational awareness map of the airport has a vital impact on estimating flight departure delay. Our proposed framework achieves an error of around 18 minutes when predicting flight departure delay at Los Angeles International Airport.
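
To make the idea of a map snapshot concrete, the sketch below shows one way trajectory points could be binned into a stack of grid frames so that a convolutional model sees both space (grid cells) and time (frame index). This is an illustration only: the abstract does not describe how the situational awareness map is actually built, so the function name `rasterize`, the normalized coordinates, the grid size, the frame count and the time horizon are all hypothetical choices.

```python
from typing import List, Tuple

# Hypothetical point format: (t_seconds, x, y), with x and y normalized to [0, 1).
Point = Tuple[float, float, float]


def rasterize(
    trajectories: List[List[Point]],
    grid: int = 8,
    frames: int = 4,
    horizon: float = 60.0,
) -> List[List[List[int]]]:
    """Bin trajectory points into `frames` occupancy grids of size grid x grid.

    Assumption: this is an illustrative encoding, not the paper's method.
    """
    # maps[f][row][col] counts the points seen in that cell during frame f.
    maps = [[[0] * grid for _ in range(grid)] for _ in range(frames)]
    for trajectory in trajectories:
        for t, x, y in trajectory:
            # Skip points outside the time window or the mapped area.
            if not (0 <= t < horizon and 0 <= x < 1 and 0 <= y < 1):
                continue
            frame = int(t / horizon * frames)  # temporal bin
            row = int(y * grid)                # spatial bin (vertical)
            col = int(x * grid)                # spatial bin (horizontal)
            maps[frame][row][col] += 1
    return maps


# One aircraft observed at two moments within a 60-second window.
snapshot = rasterize([[(0.0, 0.1, 0.1), (30.0, 0.5, 0.5)]])
```

In a setup like this, contextual features such as weather or schedules could be attached as extra channels alongside the occupancy counts, though that too is an assumption rather than something the abstract specifies.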
