Practical Vertical Federated Learning with Unsupervised Representation Learning

Aug 13, 2022

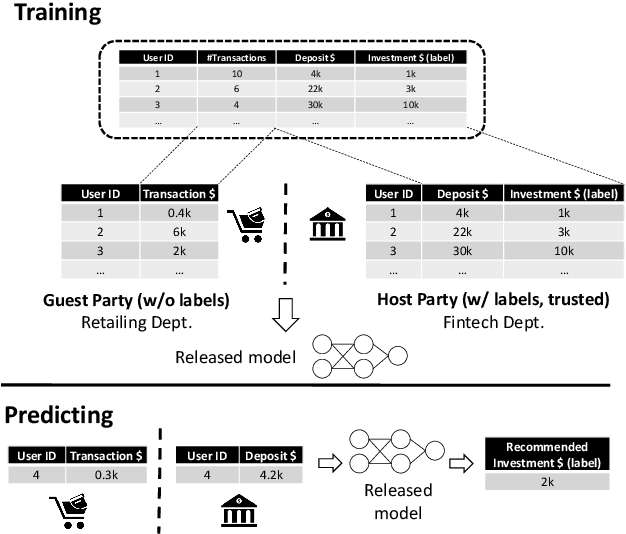

As societal concerns about data privacy have grown in recent years, data silos have formed among multiple parties in various applications. Federated learning has emerged as a learning paradigm that enables multiple parties to collaboratively train a machine learning model without sharing their raw data. Vertical federated learning, in which each party owns different features of the same set of samples and only a single party holds the labels, is an important and challenging topic in federated learning. Communication costs among parties have been a major hurdle for practical vertical federated learning systems. In this paper, we propose FedOnce, a novel communication-efficient vertical federated learning algorithm that requires only one-shot communication among parties. To improve model accuracy and provide privacy guarantees, FedOnce leverages unsupervised representation learning in the federated setting and privacy-preserving techniques based on the moments accountant. Comprehensive experiments on 10 datasets demonstrate that FedOnce achieves performance close to that of state-of-the-art vertical federated learning algorithms with much lower communication costs. Meanwhile, our privacy-preserving technique significantly outperforms state-of-the-art approaches under the same privacy budget.
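
To make the one-shot communication pattern concrete, here is a minimal Python/NumPy sketch under stated assumptions. It is not FedOnce itself. Each passive party (a party holding features but no labels) encodes its own feature columns with an unsupervised method and sends the resulting representations to the label-holding active party exactly once. The active party then trains on its own features plus the received representations, with no further exchange. PCA stands in for FedOnce's unsupervised representation learner and a least-squares linear model stands in for the active party's model. The moments-accountant privacy mechanism is omitted entirely. The function names `passive_party_encode` and `one_shot_vfl` are hypothetical.

```python
import numpy as np


def passive_party_encode(X_party, dim):
    """Hypothetical unsupervised encoder for a passive party: PCA via SVD.

    Stand-in (assumption) for FedOnce's unsupervised representation learner;
    it uses only the party's own features and never sees labels.
    """
    Xc = X_party - X_party.mean(axis=0)           # center each feature column
    _, _, Vt = np.linalg.svd(Xc, full_matrices=False)
    return Xc @ Vt[:dim].T                        # project onto top `dim` principal directions


def one_shot_vfl(X_active, y, passive_parts, dim=2):
    """Sketch of one-shot vertical FL (assumption, not the paper's algorithm).

    X_active: features held by the active (label-holding) party.
    passive_parts: list of feature matrices, one per passive party, whose
    rows are aligned to the same samples as X_active.
    """
    # Single communication round: each passive party sends one message.
    messages = [passive_party_encode(Xp, dim) for Xp in passive_parts]

    # The active party trains locally on its raw features plus the received
    # representations and a bias column; no further communication occurs.
    n = X_active.shape[0]
    features = np.hstack([X_active, *messages, np.ones((n, 1))])
    w, *_ = np.linalg.lstsq(features, y, rcond=None)
    return w, features, messages


# Usage on synthetic, row-aligned data split across three parties.
rng = np.random.default_rng(0)
n = 100
X_a = rng.normal(size=(n, 3))
parts = [rng.normal(size=(n, 4)), rng.normal(size=(n, 5))]
y = X_a @ np.array([1.0, -2.0, 0.5]) + 0.01 * rng.normal(size=n)
w, feats, msgs = one_shot_vfl(X_a, y, parts, dim=2)
print(feats.shape, len(msgs))  # → (100, 8) 2
```

In this sketch the passive parties each send a single message and never receive gradients back, so communication is one round. The trade-off is that their representations are learned without label information, which is why the quality of the unsupervised representation matters for the final accuracy.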
