Post-synaptic potential regularization has potential


Jul 19, 2019

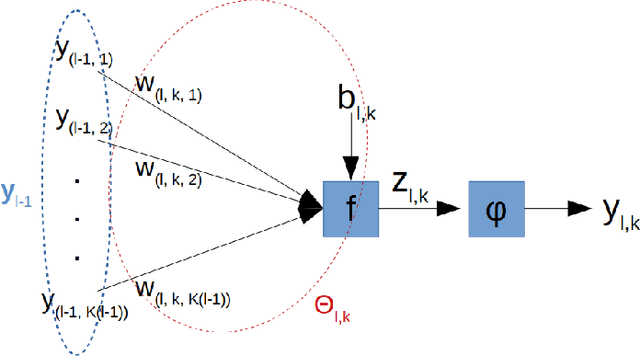

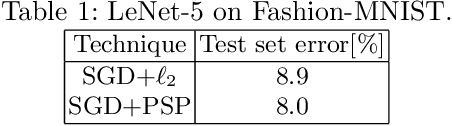

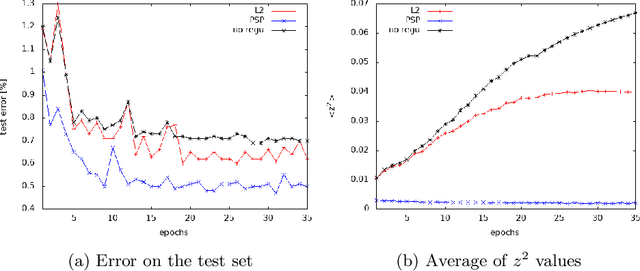

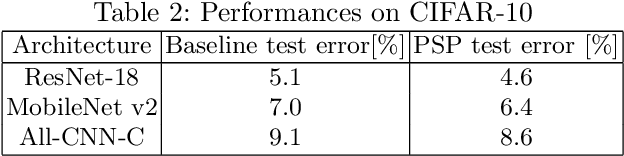

Improving generalization is one of the main challenges in training deep neural networks on classification tasks. A number of techniques have been proposed to boost performance on unseen data: from standard data augmentation to $\ell_2$ regularization, dropout, batch normalization, entropy-driven SGD and many more.

In this work we propose an elegant, simple and principled approach: post-synaptic potential regularization (PSP). We tested this regularization in a number of different state-of-the-art scenarios. Empirical results show that PSP achieves a classification error comparable to more sophisticated learning strategies in the MNIST scenario, while it improves generalization compared to $\ell_2$ regularization in deep architectures trained on CIFAR-10.
