Performance Weighting for Robust Federated Learning Against Corrupted Sources

Paper and Code

May 02, 2022

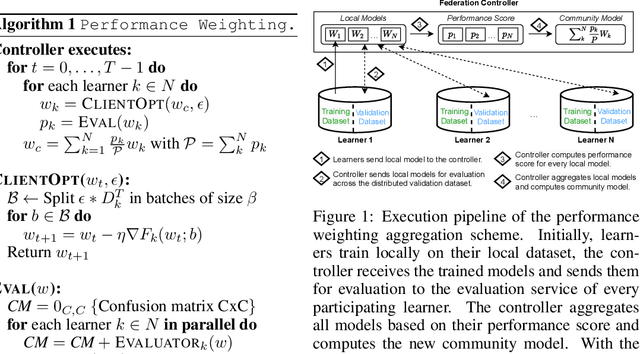

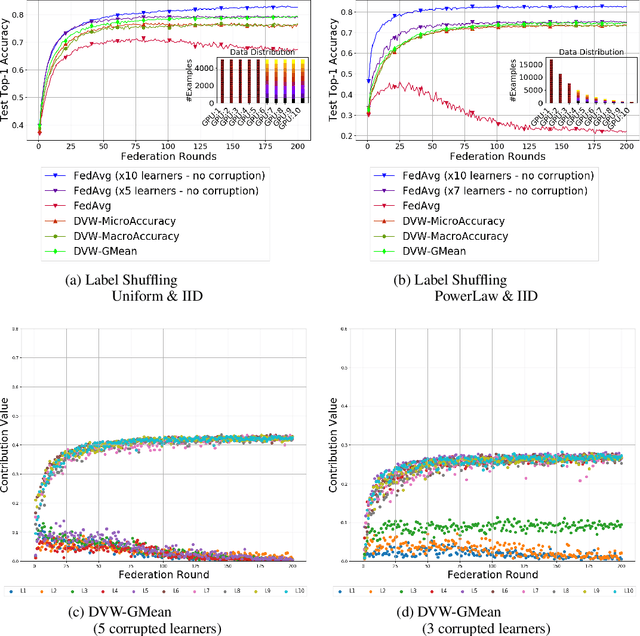

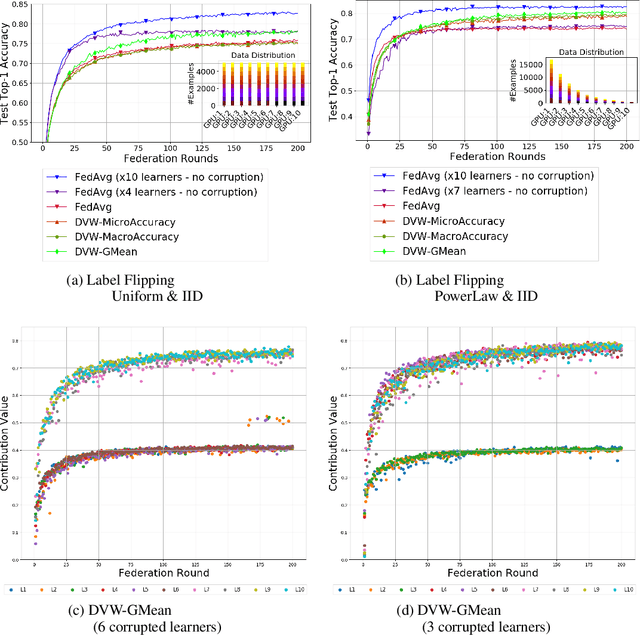

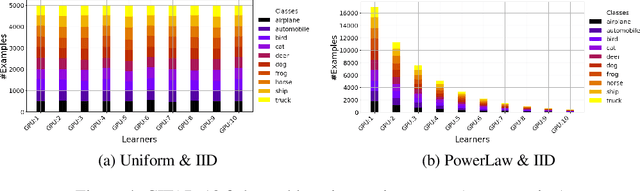

Federated Learning has emerged as a dominant computational paradigm for distributed machine learning. Its unique data privacy properties allow us to collaboratively train models while offering participating clients certain privacy-preserving guarantees. However, in real-world applications, a federated environment may consist of a mixture of benevolent and malicious clients, with the latter aiming to corrupt and degrade federated model's performance. Different corruption schemes may be applied such as model poisoning and data corruption. Here, we focus on the latter, the susceptibility of federated learning to various data corruption attacks. We show that the standard global aggregation scheme of local weights is inefficient in the presence of corrupted clients. To mitigate this problem, we propose a class of task-oriented performance-based methods computed over a distributed validation dataset with the goal to detect and mitigate corrupted clients. Specifically, we construct a robust weight aggregation scheme based on geometric mean and demonstrate its effectiveness under random label shuffling and targeted label flipping attacks.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge