Out-of-Distribution Detection in Multi-Label Datasets using Latent Space of $\beta$-VAE


Mar 10, 2020

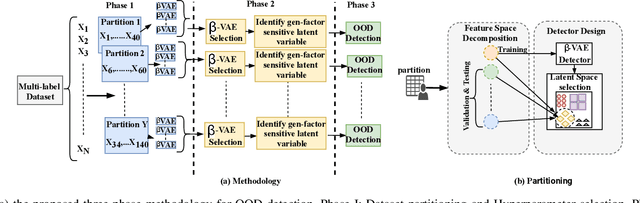

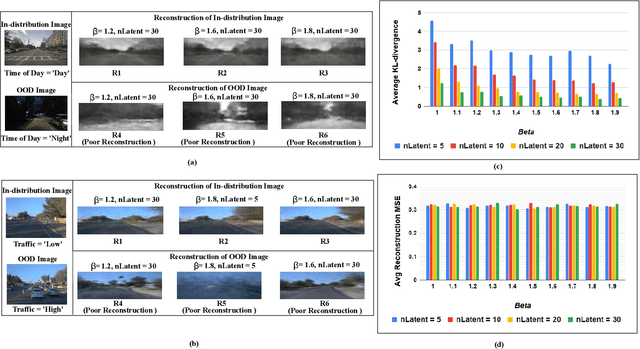

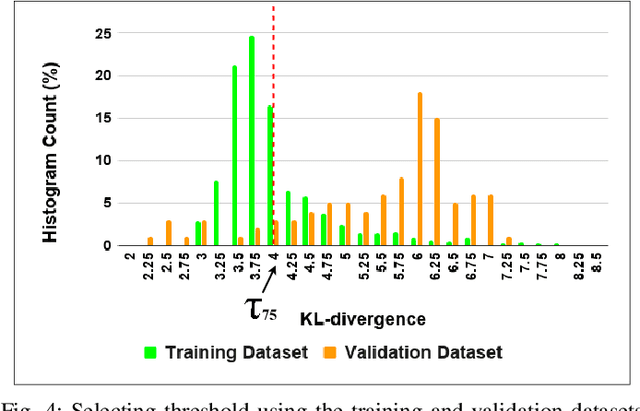

Learning Enabled Components (LECs) are widely used in a variety of perception-based autonomy tasks such as image segmentation, object detection, and end-to-end driving. These components are trained on large image datasets with multimodal factors such as weather conditions, time of day, and traffic density. The LECs learn from these factors during training; if any of these factors varies at test time, the components get confused, resulting in low-confidence predictions. Images with factors not seen during training are commonly referred to as Out-of-Distribution (OOD). For safe autonomy, it is important to identify OOD images so that a suitable mitigation strategy can be applied. Classical one-class classifiers such as SVM and SVDD are used to perform OOD detection. However, the multiple labels attached to the images in these datasets restrict the direct application of these techniques. We address this problem using the latent space of the $\beta$-Variational Autoencoder ($\beta$-VAE). We use the fact that the compact latent space generated by an appropriately selected $\beta$-VAE encodes the information about these factors in a few latent variables, which can then be used for computationally inexpensive detection. We evaluate our approach on the nuScenes dataset, and our results show that the latent space of the $\beta$-VAE is sensitive to changes in the values of the generative factors.
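
The abstract does not spell out the detection rule, so the following is only a minimal sketch of one way a few latent variables could drive an inexpensive OOD check, not the authors' implementation. It assumes the encoder outputs a mean and log-variance per latent dimension, scores an image by the KL divergence to a standard normal prior summed over a chosen subset of dimensions, and compares that score to a threshold. The names `kl_per_latent`, `ood_score`, `is_ood`, `informative_dims`, and `threshold`, as well as all numeric values, are hypothetical.

```python
import math


def kl_per_latent(mu, log_var):
    """KL( N(mu, exp(log_var)) || N(0, 1) ) for each latent dimension."""
    return [0.5 * (math.exp(lv) + m * m - 1.0 - lv) for m, lv in zip(mu, log_var)]


def ood_score(mu, log_var, informative_dims):
    """Sum the per-dimension KL over the selected latent variables only."""
    kl = kl_per_latent(mu, log_var)
    return sum(kl[i] for i in informative_dims)


def is_ood(mu, log_var, informative_dims, threshold):
    """Flag an input as OOD when its score exceeds the (assumed) threshold."""
    return ood_score(mu, log_var, informative_dims) > threshold


# Hypothetical encoder outputs for a 4-dimensional latent space.
in_dist_mu, in_dist_log_var = [0.1, -0.2, 0.0, 0.05], [0.0, 0.0, 0.0, 0.0]
shifted_mu, shifted_log_var = [3.0, -0.2, 2.5, 0.05], [0.0, 0.0, 0.0, 0.0]

# Assumption: dimensions 0 and 2 were found to track the factor of interest.
informative_dims = [0, 2]
threshold = 1.0  # assumed; in practice it would be calibrated on held-out data

in_dist_flag = is_ood(in_dist_mu, in_dist_log_var, informative_dims, threshold)
shifted_flag = is_ood(shifted_mu, shifted_log_var, informative_dims, threshold)
```

Restricting the score to a handful of dimensions is what keeps the check cheap: the per-image cost is a few arithmetic operations on values the encoder already produces.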
