ORSA: Outlier Robust Stacked Aggregation for Best- and Worst-Case Approximations of Ensemble Systems\

Paper and Code

Nov 17, 2021

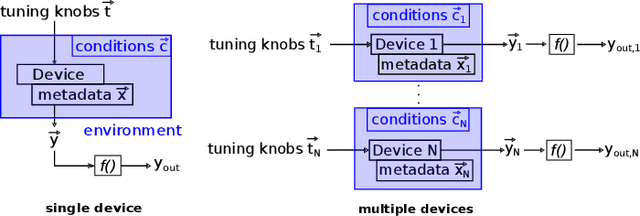

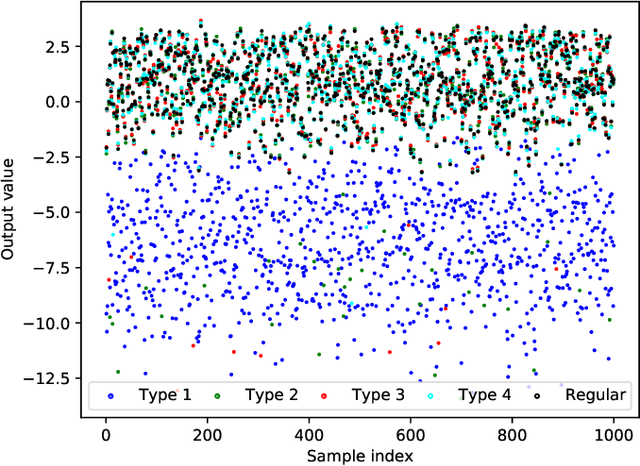

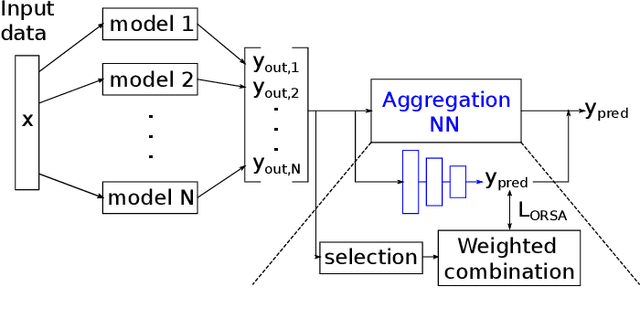

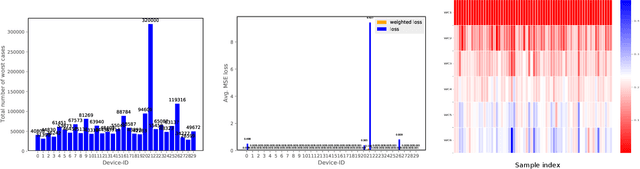

In recent years, the usage of ensemble learning in applications has grown significantly due to increasing computational power allowing the training of large ensembles in reasonable time frames. Many applications, e.g., malware detection, face recognition, or financial decision-making, use a finite set of learning algorithms and do aggregate them in a way that a better predictive performance is obtained than any other of the individual learning algorithms. In the field of Post-Silicon Validation for semiconductor devices (PSV), data sets are typically provided that consist of various devices like, e.g., chips of different manufacturing lines. In PSV, the task is to approximate the underlying function of the data with multiple learning algorithms, each trained on a device-specific subset, instead of improving the performance of arbitrary classifiers on the entire data set. Furthermore, the expectation is that an unknown number of subsets describe functions showing very different characteristics. Corresponding ensemble members, which are called outliers, can heavily influence the approximation. Our method aims to find a suitable approximation that is robust to outliers and represents the best or worst case in a way that will apply to as many types as possible. A 'soft-max' or 'soft-min' function is used in place of a maximum or minimum operator. A Neural Network (NN) is trained to learn this 'soft-function' in a two-stage process. First, we select a subset of ensemble members that is representative of the best or worst case. Second, we combine these members and define a weighting that uses the properties of the Local Outlier Factor (LOF) to increase the influence of non-outliers and to decrease outliers. The weighting ensures robustness to outliers and makes sure that approximations are suitable for most types.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge