Order-Optimal Projection-Free Algorithm for Adversarially Constrained Online Convex Optimization

Paper and Code

Feb 23, 2025

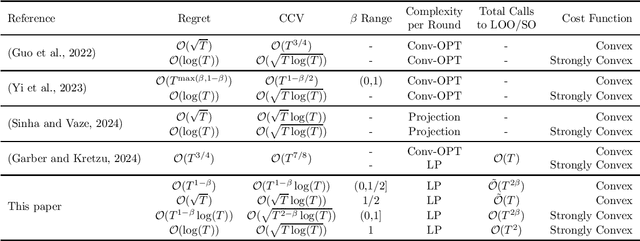

Projection-based algorithms for constrained Online Convex Optimization (COCO) face scalability challenges in high-dimensional settings due to the computational complexity of projecting iterates onto constraint sets. This paper introduces a projection-free algorithm for COCO that achieves state-of-the-art performance guarantees while eliminating the need for projections. By integrating a separation oracle with adaptive Online Gradient Descent (OGD) and employing a Lyapunov-driven surrogate function, while dynamically adjusting step sizes using gradient norms, our method jointly optimizes the regret and cumulative constraint violation (CCV). We also use a blocked version of OGD that helps achieve tradeoffs betweeen the regret and CCV with the number of calls to the separation oracle. For convex cost functions, our algorithm attains an optimal regret of $\mathcal{O}(\sqrt{T})$ and a CCV of $\mathcal{O}(\sqrt{T} \log T)$, matching the best-known projection-based results, while only using $\tilde{\mathcal{O}}({T})$ calls to the separation oracle. The results also demonstrate a tradeoff where lower calls to the separation oracle increase the regret and the CCV. In the strongly convex setting, we further achieve a regret of $\mathcal{O}(\log T)$ and a CCV of $\mathcal{O}(\sqrt{T\log T} )$, while requiring ${\mathcal{O}}({T}^2)$ calls to the separation oracle. Further, tradeoff with the decreasing oracle calls is studied. These results close the gap between projection-free and projection-based approaches, demonstrating that projection-free methods can achieve performance comparable to projection-based counterparts.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge