Order Optimal One-Shot Distributed Learning

Paper and Code

Nov 02, 2019

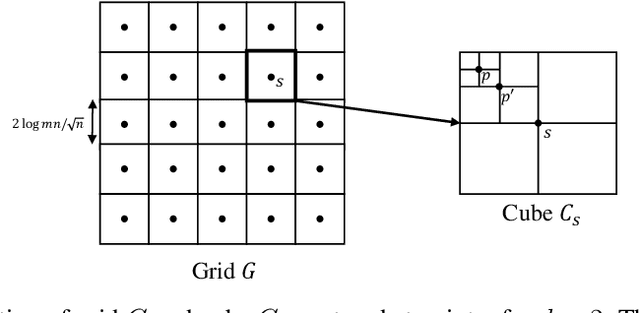

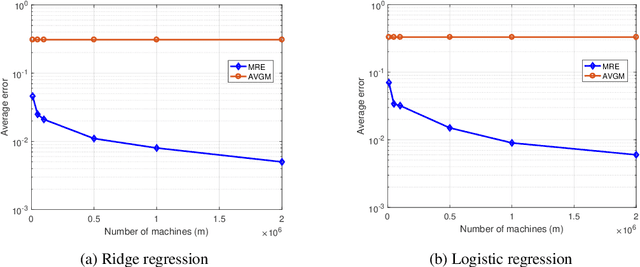

We consider distributed statistical optimization in one-shot setting, where there are $m$ machines each observing $n$ i.i.d. samples. Based on its observed samples, each machine then sends an $O(\log(mn))$-length message to a server, at which a parameter minimizing an expected loss is to be estimated. We propose an algorithm called Multi-Resolution Estimator (MRE) whose expected error is no larger than $\tilde{O}\big(m^{-{1}/{\max(d,2)}} n^{-1/2}\big)$, where $d$ is the dimension of the parameter space. This error bound meets existing lower bounds up to poly-logarithmic factors, and is thereby order optimal. The expected error of MRE, unlike existing algorithms, tends to zero as the number of machines ($m$) goes to infinity, even when the number of samples per machine ($n$) remains upper bounded by a constant. This property of the MRE algorithm makes it applicable in new machine learning paradigms where $m$ is much larger than $n$.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge