Optimal DR-Submodular Maximization and Applications to Provable Mean Field Inference

Paper and Code

May 19, 2018

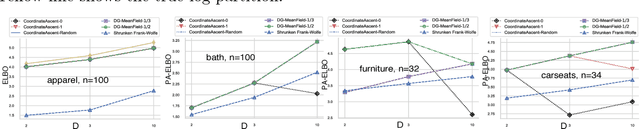

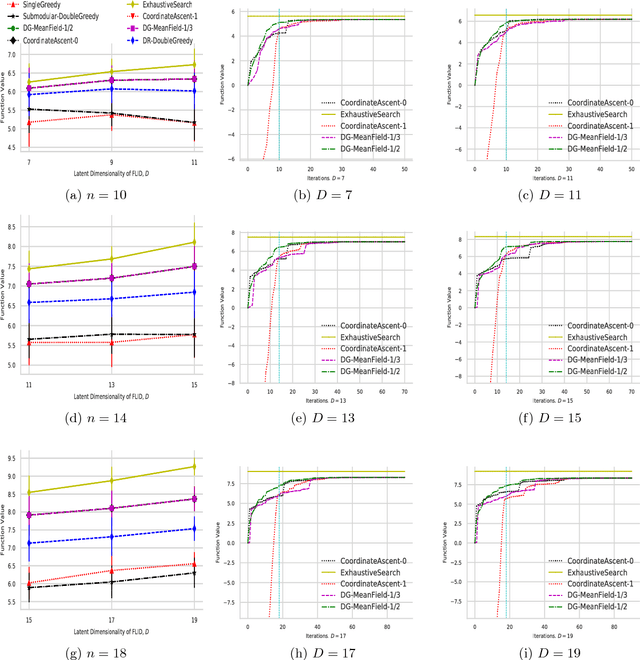

Mean field inference in probabilistic models is generally a highly nonconvex problem. Existing optimization methods, e.g., coordinate ascent algorithms, can only generate local optima. In this work we propose provable mean filed methods for probabilistic log-submodular models and its posterior agreement (PA) with strong approximation guarantees. The main algorithmic technique is a new Double Greedy scheme, termed DR-DoubleGreedy, for continuous DR-submodular maximization with box-constraints. It is a one-pass algorithm with linear time complexity, reaching the optimal 1/2 approximation ratio, which may be of independent interest. We validate the superior performance of our algorithms against baseline algorithms on both synthetic and real-world datasets.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge