Online Convex Dictionary Learning

Paper and Code

Apr 04, 2019

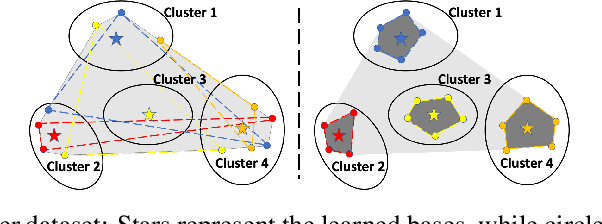

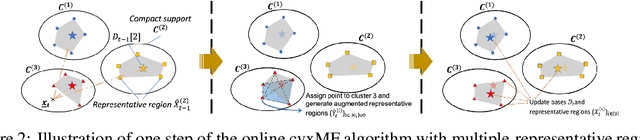

Dictionary learning is a dimensionality reduction technique widely used in data mining, machine learning, and signal processing. Nevertheless, many dictionary learning algorithms, such as variants of Matrix Factorization (MF), do not scale adequately with the size of available datasets. Furthermore, the dictionary matrices derived by scalable dictionary learning methods lack interpretability. To mitigate these two issues, we propose a novel low-complexity, batch online convex dictionary learning algorithm. The algorithm sequentially processes small batches of data maintained in a fixed amount of storage space, and produces meaningful dictionaries that satisfy convexity constraints. Our analytical results are twofold. First, we establish convergence guarantees for the proposed online learning scheme. Second, we show that a subsequence of the generated dictionaries converges to a stationary point of the approximation-error function. Experimental results on synthetic and real-world datasets demonstrate both the computational savings of the proposed online method with respect to convex non-negative MF, and performance guarantees comparable to those of online non-convex learning.
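To make the idea of batch-wise processing under convexity constraints concrete, here is a minimal sketch in Python. It is not the algorithm from the paper. It assumes a simplified setup in which a fixed buffer of stored samples is kept, each dictionary atom is a convex combination of the buffered samples (columns of a weight matrix `W` on the probability simplex), codes are computed by ridge regression, and `W` is updated by projected gradient steps on accumulated batch statistics. The names `project_simplex`, `online_convex_dl`, `buffer`, `ridge`, and `inner_steps` are all hypothetical and chosen for illustration.

```python
import numpy as np

def project_simplex(v):
    """Euclidean projection of a 1-D vector onto the probability simplex."""
    u = np.sort(v)[::-1]                      # sort in decreasing order
    css = np.cumsum(u) - 1.0
    idx = np.arange(1, len(v) + 1)
    rho = np.nonzero(u - css / idx > 0)[0][-1]
    theta = css[rho] / (rho + 1)
    return np.maximum(v - theta, 0.0)

def online_convex_dl(batches, buffer, k, ridge=0.1, inner_steps=20, seed=0):
    """Sketch: learn D = buffer @ W with every column of W on the simplex.

    Assumption: the buffer of representative samples is fixed up front,
    so storage stays constant no matter how many batches arrive.
    """
    rng = np.random.default_rng(seed)
    d, m = buffer.shape
    W = np.apply_along_axis(project_simplex, 0, rng.random((m, k)))
    S_aa = np.zeros((k, k))                   # running sum of A @ A.T
    S_xa = np.zeros((d, k))                   # running sum of X @ A.T
    G = buffer.T @ buffer                     # Gram matrix of the buffer
    for X in batches:
        D = buffer @ W
        # Ridge-regression codes for the current batch (a simplification).
        A = np.linalg.solve(D.T @ D + ridge * np.eye(k), D.T @ X)
        S_aa += A @ A.T
        S_xa += X @ A.T
        # Step size from a Lipschitz bound on the gradient of the surrogate.
        step = 1.0 / (np.linalg.norm(G, 2) * np.linalg.norm(S_aa, 2) + 1e-12)
        for _ in range(inner_steps):
            grad = G @ W @ S_aa - buffer.T @ S_xa
            W = np.apply_along_axis(project_simplex, 0, W - step * grad)
    return W, buffer @ W

# Usage on synthetic data: 20 buffered samples, then 18 batches of 10 samples.
rng = np.random.default_rng(0)
data = rng.random((5, 200))
buffer = data[:, :20]
batches = [data[:, i:i + 10] for i in range(20, 200, 10)]
W, D = online_convex_dl(batches, buffer, k=3)
```

Because every column of `W` is non-negative and sums to one, each atom in `D` lies in the convex hull of the buffered samples, which is the sense in which such a dictionary is interpretable. How the representative samples are chosen and refreshed is left out of this sketch.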
