One More Check: Making "Fake Background" Be Tracked Again

Apr 19, 2021

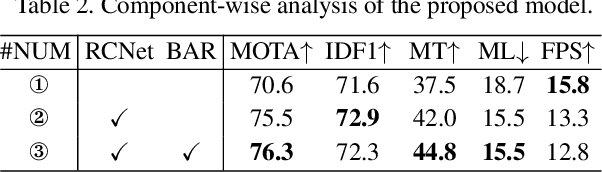

One-shot multi-object tracking, which integrates object detection and ID embedding extraction into a unified network, has achieved groundbreaking results in recent years. However, current one-shot trackers rely solely on single-frame detections to predict candidate bounding boxes, which may be unreliable under severe visual degradation, e.g., motion blur or occlusion. Once a target bounding box is mistakenly classified as background by the detector, the temporal consistency of its corresponding tracklet is no longer maintained, as shown in Fig. 1. In this paper, we set out to restore the misclassified bounding boxes, i.e., "fake background", by proposing a re-check network. The re-check network propagates previous tracklets to the current frame by exploring the relation between cross-frame temporal cues and current candidates using a modified cross-correlation layer. The propagation results help to reload the "fake background" and eventually repair the broken tracklets. By inserting the re-check network into a strong baseline tracker, CSTrack (a variant of JDE), our model improves MOTA by $70.7 \rightarrow 76.7$ on MOT16 and $70.6 \rightarrow 76.3$ on MOT17. Code is publicly available at https://github.com/JudasDie/SOTS.
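The re-check idea described above can be illustrated with a small sketch. This is not the paper's network: the modified cross-correlation layer is replaced here by a plain cosine similarity between each tracklet's ID embedding and the current frame's embedding map, and the function names (`similarity_map`, `recheck`) and both thresholds are hypothetical, chosen only for illustration. It shows how locations rejected by the detector could be flagged for restoration when a previous tracklet matches them strongly.

```python
import numpy as np


def similarity_map(track_embedding, frame_embeddings):
    """Cosine similarity between one tracklet embedding of shape (C,)
    and a per-location embedding map of shape (C, H, W).

    Stand-in for the paper's modified cross-correlation layer (assumption).
    """
    c, h, w = frame_embeddings.shape
    flat = frame_embeddings.reshape(c, h * w)
    # Normalise every location's embedding and the query to unit length.
    flat = flat / (np.linalg.norm(flat, axis=0, keepdims=True) + 1e-12)
    query = track_embedding / (np.linalg.norm(track_embedding) + 1e-12)
    return (query @ flat).reshape(h, w)


def recheck(track_embeddings, frame_embeddings, detector_scores,
            sim_threshold=0.8, det_threshold=0.5):
    """Return an (H, W) boolean mask of candidate "fake background" locations:
    places the detector scored below det_threshold but where at least one
    previous tracklet has similarity >= sim_threshold.

    Both thresholds are illustrative values, not taken from the paper.
    """
    _, h, w = frame_embeddings.shape
    if len(track_embeddings) == 0:
        # No previous tracklets, so nothing can be propagated.
        return np.zeros((h, w), dtype=bool)
    maps = np.stack([similarity_map(e, frame_embeddings)
                     for e in track_embeddings])
    best = maps.max(axis=0)                    # strongest tracklet response
    rejected = detector_scores < det_threshold  # detector said "background"
    return rejected & (best >= sim_threshold)
```

In a full tracker, the flagged locations would be converted back into bounding boxes and passed to data association; that step is omitted here.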
