On the choice of graph neural network architectures

Nov 13, 2019

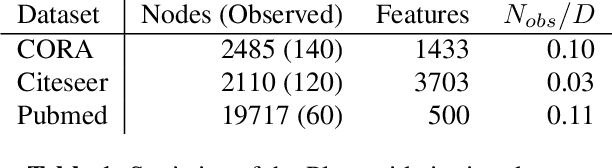

Seminal works on graph neural networks have primarily targeted semi-supervised node classification problems with few observed labels and high-dimensional signals. With the development of graph networks, this setup has become a de facto benchmark for a significant body of research. Interestingly, several works have recently shown that graph neural networks do not perform much better than predefined low-pass filters followed by a linear classifier in these particular settings. However, when learning with little data in a high-dimensional space, it is not surprising that simple and heavily regularized learning methods are near-optimal. In this paper, we show empirically that in settings with fewer features and more training data, more complex graph networks significantly outperform simpler architectures, and we offer a few insights toward the proper choice of graph neural network architectures. Finally, we outline the importance of using sufficiently diverse benchmarks (including lower-dimensional signals) when designing and studying new types of graph neural networks.
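To make the simple baseline mentioned above concrete, here is a minimal sketch of a predefined low-pass filter followed by a linear classifier. It is an illustration only and not the paper's code: the filter choice (repeated multiplication by the symmetric-normalized adjacency matrix with self-loops), the ridge-regression fit onto one-hot labels, and the names `low_pass_features`, `fit_linear_classifier`, `predict`, `k`, and `ridge` are all assumptions made for this example.

```python
import numpy as np


def low_pass_features(adj, x, k=2):
    """Smooth node features x with k applications of a fixed graph filter.

    Assumed filter: S = D^{-1/2} (A + I) D^{-1/2}, which has no trainable
    parameters and attenuates high-frequency components of the signal.
    """
    n = adj.shape[0]
    a_hat = adj + np.eye(n)                 # add self-loops
    deg = a_hat.sum(axis=1)                 # degrees, all >= 1
    d_inv_sqrt = 1.0 / np.sqrt(deg)
    s = d_inv_sqrt[:, None] * a_hat * d_inv_sqrt[None, :]
    out = np.asarray(x, dtype=float)
    for _ in range(k):
        out = s @ out
    return out


def fit_linear_classifier(feats, labels, n_classes, ridge=1e-2):
    """Fit a linear map by ridge regression onto one-hot labels.

    This is a simple stand-in for a regularized linear classifier.
    """
    targets = np.eye(n_classes)[labels]
    d = feats.shape[1]
    gram = feats.T @ feats + ridge * np.eye(d)
    return np.linalg.solve(gram, feats.T @ targets)


def predict(feats, weights):
    """Return the class with the largest linear score for each node."""
    return np.argmax(feats @ weights, axis=1)
```

In a semi-supervised setting, one would compute the filtered features once for all nodes, fit the weights on the few labeled nodes only, and then call `predict` on the remaining nodes. The only learned parameters are those of the linear map, which is what makes this baseline heavily regularized compared with a trainable graph network.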
