On the Adversarial Transferability of ConvMixer Models


Sep 19, 2022

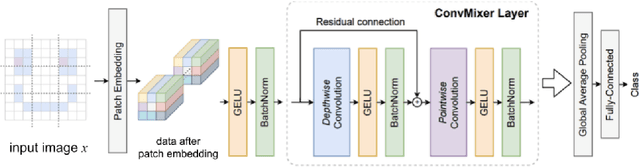

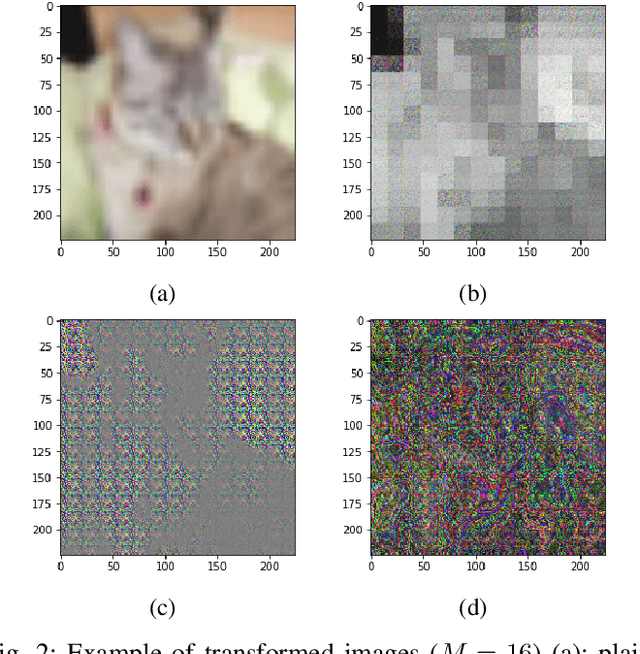

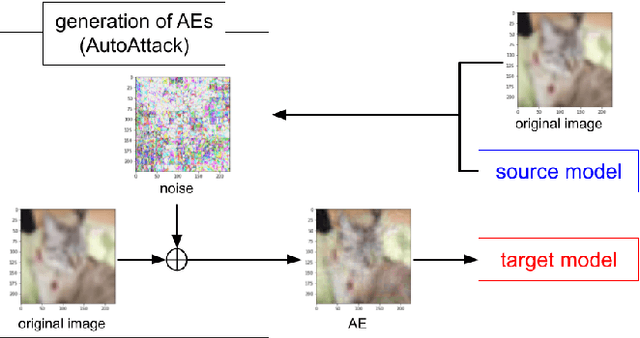

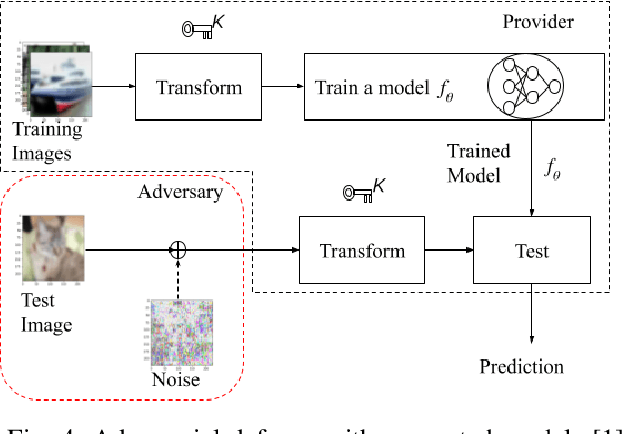

Deep neural networks (DNNs) are well known to be vulnerable to adversarial examples (AEs). AEs are also transferable: AEs generated for a source model can fool a different black-box model (the target model) with non-trivial probability. In this paper, we investigate, for the first time, adversarial transferability between models that include ConvMixer, an isotropic network. To evaluate transferability objectively, we measure the robustness of the models with AutoAttack, a benchmark attack method. In an image classification experiment, ConvMixer is confirmed to be vulnerable to adversarial transferability.
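To make the notion of transferability concrete, here is a minimal sketch of how a transfer rate might be measured. It is not the paper's setup: it assumes two toy binary logistic classifiers in place of ConvMixer and the other models, and it swaps AutoAttack for a one-step FGSM attack. The helper names (`fgsm_linear`, `predict`, `transfer_rate`) and the exact definition of the rate are illustrative assumptions.

```python
import numpy as np

def predict(x, w, b):
    """Hard labels from a linear model: 1 if w.x + b > 0, else 0."""
    return (x @ w + b > 0).astype(int)

def fgsm_linear(x, y, w, b, eps):
    """One-step FGSM against a logistic model p = sigmoid(w.x + b).

    Assumption: this stands in for the stronger AutoAttack benchmark.
    """
    p = 1.0 / (1.0 + np.exp(-(x @ w + b)))
    # Gradient of the logistic loss w.r.t. the input is (p - y) * w.
    grad = (p - y)[:, None] * w[None, :]
    # Move each input by eps in the direction that increases the loss.
    return x + eps * np.sign(grad)

def transfer_rate(x, x_adv, y, src, tgt):
    """Share of AEs that fool the source and also fool the target.

    Only inputs that both models classify correctly before the attack
    are counted (one possible convention, assumed here).
    """
    clean_ok = (predict(x, *src) == y) & (predict(x, *tgt) == y)
    fooled_src = predict(x_adv, *src) != y
    fooled_tgt = predict(x_adv, *tgt) != y
    base = clean_ok & fooled_src
    if base.sum() == 0:
        return 0.0
    return float((base & fooled_tgt).sum() / base.sum())
```

As a usage example under the same assumptions, the attack is crafted on a source model `(w_src, b_src)` and evaluated on a separately parameterized target:

```python
rng = np.random.default_rng(0)
w_src = np.array([1.0, -2.0, 0.5, 1.5, -1.0])
w_tgt = w_src + 0.3 * rng.normal(size=5)   # a similar but distinct model
x = rng.normal(size=(200, 5))
y = predict(x, w_src, 0.0)                 # labels defined by the source model
x_adv = fgsm_linear(x, y, w_src, 0.0, eps=0.5)
rate = transfer_rate(x, x_adv, y, (w_src, 0.0), (w_tgt, 0.0))
```

A rate close to 1 would indicate that examples crafted on the source transfer readily to the target. A rate close to 0 would indicate that the target is largely unaffected by them.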

* 5 pages, 5 figures, 5 tables. arXiv admin note: substantial text overlap with arXiv:2209.02997