On Latent Distributions Without Finite Mean in Generative Models


Jun 05, 2018

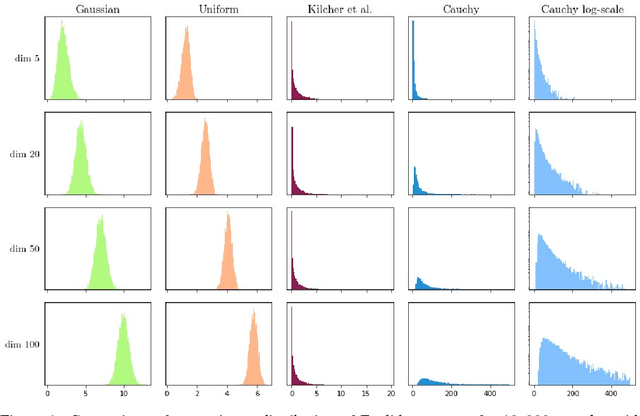

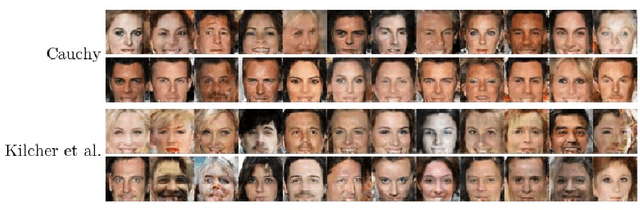

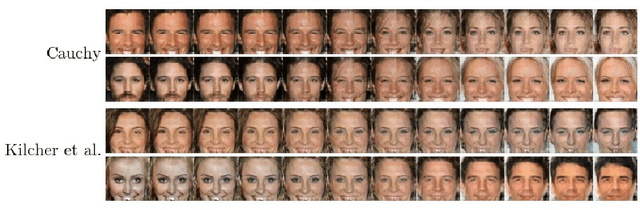

We investigate the properties of multidimensional probability distributions in the context of latent space prior distributions of implicit generative models. Our work revolves around a phenomenon that arises when decoding linear interpolations between two random latent vectors: the interpolation path passes through regions of latent space close to the origin, which causes a distribution mismatch. We show that, due to the Central Limit Theorem, this region is almost never sampled during training. As a result, linear interpolations may generate unrealistic data, and their use as a tool for checking the quality of a trained model is questionable. We propose using the multidimensional Cauchy distribution as the latent prior. The Cauchy distribution does not satisfy the assumptions of the CLT and has a number of properties that allow it to work well in conjunction with linear interpolations. We also provide two general methods of creating non-linear interpolations that are easily applicable to a large family of common latent distributions. Finally, we empirically analyze the quality of data generated from low-probability-mass regions for the DCGAN model on the CelebA dataset.
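The mismatch described above can be illustrated numerically. The following is a minimal sketch, not the authors' code: it assumes latent vectors with i.i.d. coordinates (standard normal or standard Cauchy), a latent dimension of 100, and a hypothetical helper named `midpoint_norm_ratio`. It compares the typical norm of the midpoint of a linear interpolation with the typical norm of the sampled endpoints.

```python
import numpy as np


def midpoint_norm_ratio(sample_fn, dim=100, n_pairs=5000, seed=0):
    """Median norm of interpolation midpoints divided by median endpoint norm.

    sample_fn(rng, shape) is a hypothetical callback that draws latent
    vectors with i.i.d. coordinates (an assumption of this sketch).
    """
    rng = np.random.default_rng(seed)
    z1 = sample_fn(rng, (n_pairs, dim))
    z2 = sample_fn(rng, (n_pairs, dim))
    mid = 0.5 * (z1 + z2)  # linear interpolation at t = 0.5
    endpoint_norms = np.linalg.norm(np.concatenate([z1, z2]), axis=1)
    mid_norms = np.linalg.norm(mid, axis=1)
    # Medians are used because Cauchy norms have no finite mean.
    return np.median(mid_norms) / np.median(endpoint_norms)


def sample_normal(rng, shape):
    return rng.standard_normal(shape)


def sample_cauchy(rng, shape):
    return rng.standard_cauchy(shape)


if __name__ == "__main__":
    print("normal:", midpoint_norm_ratio(sample_normal))
    print("cauchy:", midpoint_norm_ratio(sample_cauchy))
```

Under these assumptions, the Gaussian midpoints should have a typical norm of roughly 1/sqrt(2) times that of the endpoints, so they fall in a shell that training samples almost never reach. The average of two independent standard Cauchy variables is again standard Cauchy, so the Cauchy midpoints should follow the same distribution as the endpoints and the ratio should stay close to 1.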
