On Inferring Training Data Attributes in Machine Learning Models


Aug 28, 2019

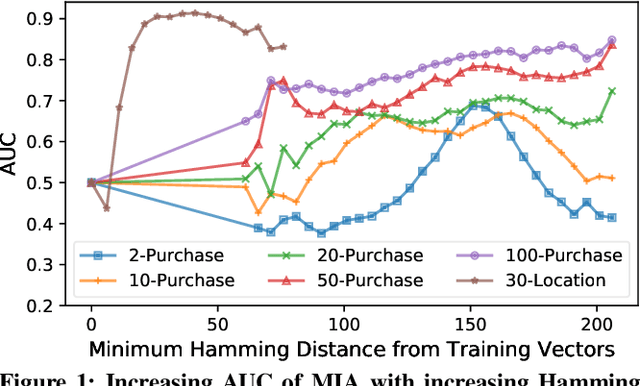

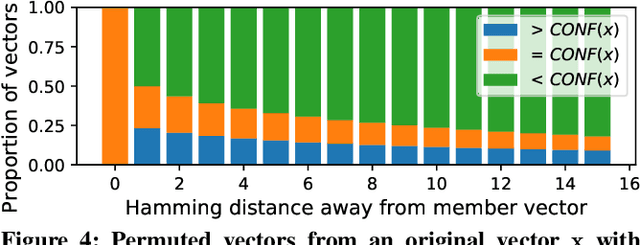

A number of recent works have demonstrated that API access to machine learning models leaks information about the dataset records used to train them. Further, the work of \cite{somesh-overfit} shows that the resulting membership inference attacks (MIAs) may be sufficient to construct a stronger class of attribute inference attacks (AIAs), which, given a partial view of a record, can guess the missing attributes. In this work, we show (to the contrary) that MIA may not be sufficient to build a successful AIA. This is because an AIA requires the ability to distinguish between similar records (differing in only a few attributes), and, as we demonstrate, current MIAs are unsuccessful in distinguishing member records from similar non-member records. We thus propose a relaxed notion of AIA, whose goal is only to guess the missing attributes approximately, and argue that such an attack is more likely to succeed if MIA is to be used as a subroutine for inferring training record attributes.
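The idea of using MIA as a subroutine for AIA can be illustrated with a minimal Python sketch. This is not the paper's implementation: the function names (`attribute_inference`, `exact_oracle`, `coarse_oracle`), the dictionary record format, and both toy membership scorers are assumptions made purely for illustration. The attack completes the partial record with each candidate value and keeps the value that receives the highest membership score.

```python
from typing import Callable, Dict, Hashable, Iterable, List, Tuple

Record = Dict[str, Hashable]
Scorer = Callable[[Record], float]


def attribute_inference(
    partial: Record,
    missing_attr: str,
    candidates: Iterable[Hashable],
    membership_score: Scorer,
) -> Tuple[Hashable, float]:
    """Guess a missing attribute by querying a membership scorer.

    Each candidate value is plugged into the partial record, and the
    value whose completed record scores highest is returned. Ties are
    resolved in favour of the earliest candidate.
    """
    best_value, best_score = None, float("-inf")
    for value in candidates:
        completed = {**partial, missing_attr: value}
        score = membership_score(completed)
        if score > best_score:
            best_value, best_score = value, score
    return best_value, best_score


def exact_oracle(train: List[Record]) -> Scorer:
    """Hypothetical ideal MIA: scores 1.0 only for exact training records."""
    members = {frozenset(r.items()) for r in train}
    return lambda rec: 1.0 if frozenset(rec.items()) in members else 0.0


def coarse_oracle(train: List[Record], ignored_attr: str) -> Scorer:
    """Hypothetical weak MIA: cannot see `ignored_attr`, so records that
    differ only in that attribute receive the same score."""
    def strip(rec: Record) -> frozenset:
        return frozenset((k, v) for k, v in rec.items() if k != ignored_attr)

    members = {strip(r) for r in train}
    return lambda rec: 1.0 if strip(rec) in members else 0.0
```

With the idealized `exact_oracle`, the attack recovers the true value of the missing attribute. With `coarse_oracle`, every candidate receives the same score, so the returned value is just the first candidate tried. That is the failure mode the abstract describes: an MIA that cannot separate a member from a similar non-member gives the AIA no signal.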
