Omnidirectional Images as Moving Camera Videos

May 21, 2020

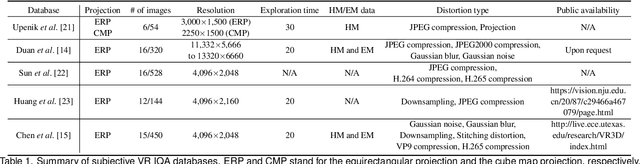

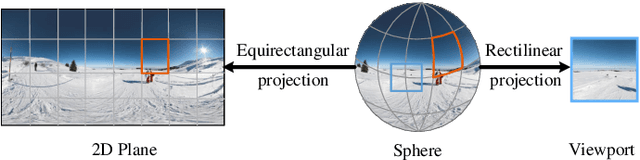

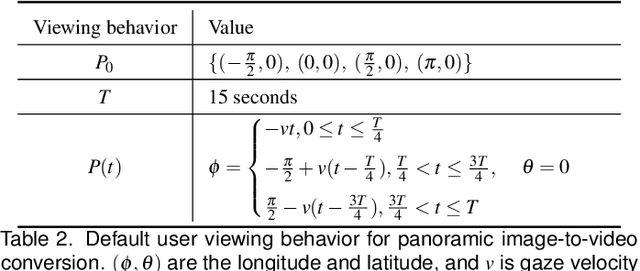

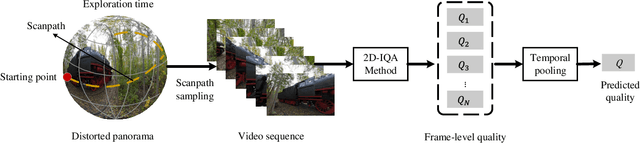

Omnidirectional images (also referred to as static 360° panoramas) impose viewing conditions that differ substantially from those of regular 2D images. A natural question arises: how do humans perceive image distortions in immersive virtual reality (VR) environments? We argue that, apart from the distorted panorama itself, three types of viewing behavior governed by VR conditions are crucial in determining its perceived quality: starting point, exploration time, and scanpath. In this paper, we propose a principled computational framework for objective quality assessment of 360° images that embodies this threefold behavior. Specifically, we first transform an omnidirectional image into several video representations, one per user, using the viewing behavior of different users. We then leverage recent advances in full-reference 2D image/video quality assessment to compute the perceived quality of the panorama. We construct a set of specific quality measures within the proposed framework and demonstrate their promise on two VR quality databases.
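The two-step pipeline described above (panorama to per-user videos, then 2D quality assessment) can be illustrated with a minimal sketch. This is not the authors' implementation, and every name in it is hypothetical. It assumes an equirectangular panorama, a scanpath given as a list of (longitude, latitude) pairs in radians (the first entry acts as the starting point, the list length as the exploration time), a rectilinear viewport with nearest-neighbor sampling, PSNR as a stand-in for the full-reference 2D measure, and plain averaging over frames and users.

```python
import numpy as np

def viewport(pano, lon0, lat0, fov=np.pi / 2, size=64):
    """Sample a square rectilinear viewport centered at (lon0, lat0), in radians,
    from an equirectangular panorama (assumed projection, nearest-neighbor)."""
    h, w = pano.shape[:2]
    f = 0.5 * size / np.tan(0.5 * fov)          # focal length in pixels
    offs = np.arange(size) + 0.5 - 0.5 * size   # pixel centers around the optical axis
    x, y = np.meshgrid(offs, -offs)             # x points right, y points up
    z = np.full_like(x, f)
    # Pitch the viewing rays up by lat0 (rotation about the x axis).
    y1 = y * np.cos(lat0) + z * np.sin(lat0)
    z1 = -y * np.sin(lat0) + z * np.cos(lat0)
    # Yaw the viewing rays by lon0 (rotation about the y axis).
    x2 = x * np.cos(lon0) + z1 * np.sin(lon0)
    z2 = -x * np.sin(lon0) + z1 * np.cos(lon0)
    norm = np.sqrt(x2**2 + y1**2 + z2**2)
    lon = np.arctan2(x2, z2)
    lat = np.arcsin(np.clip(y1 / norm, -1.0, 1.0))
    # Map spherical coordinates to panorama pixel indices.
    cols = np.floor((lon / (2 * np.pi) + 0.5) * w).astype(int) % w
    rows = np.clip(np.floor((0.5 - lat / np.pi) * h).astype(int), 0, h - 1)
    return pano[rows, cols]

def psnr(ref, dist, peak=255.0):
    """PSNR between two frames; a stand-in for any full-reference 2D measure."""
    mse = np.mean((ref.astype(np.float64) - dist.astype(np.float64)) ** 2)
    return np.inf if mse == 0 else 10.0 * np.log10(peak**2 / mse)

def panorama_quality(ref, dist, scanpaths, fov=np.pi / 2, size=64):
    """Score a distorted panorama: one 'video' per user scanpath, frame-wise
    PSNR, then a plain mean over frames and over users (assumed pooling)."""
    user_scores = []
    for path in scanpaths:
        frame_scores = [
            psnr(viewport(ref, lon, lat, fov, size),
                 viewport(dist, lon, lat, fov, size))
            for lon, lat in path
        ]
        user_scores.append(np.mean(frame_scores))
    return float(np.mean(user_scores))
```

Here each scanpath yields one sequence of viewport frames, so two users who start at different points or explore for different durations produce different videos, and therefore possibly different scores, for the same distorted panorama. Swapping `psnr` for another full-reference measure changes the quality model without touching the viewport sampling.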
