Off-the-Shelf Unsupervised NMT

Nov 06, 2018

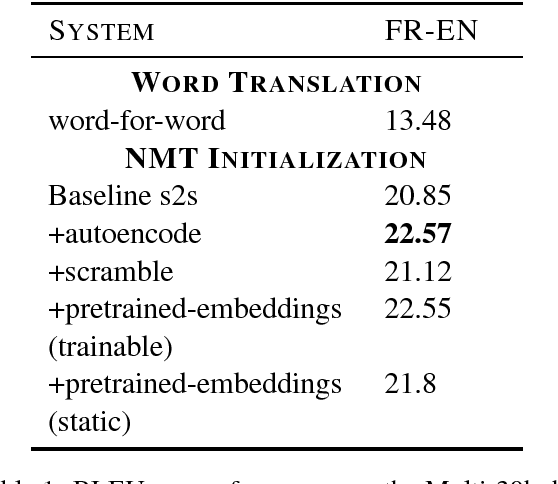

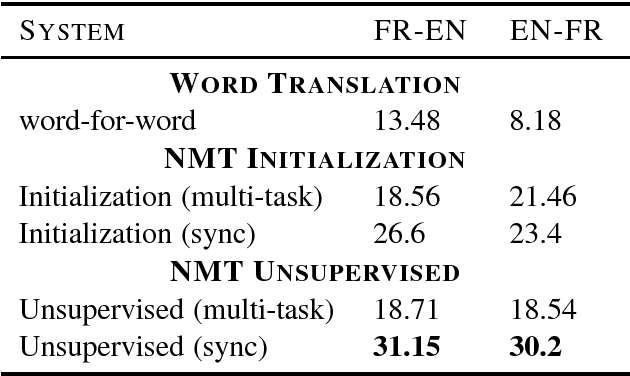

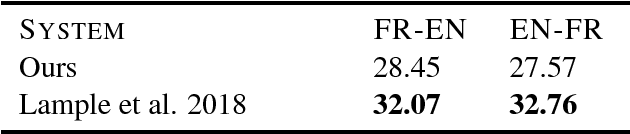

We frame unsupervised machine translation (MT) in the context of multi-task learning (MTL), combining insights from both lines of research. We leverage off-the-shelf neural MT architectures to train unsupervised MT models with no parallel data and show that such models can achieve reasonably good performance, competitive with models purpose-built for unsupervised MT. Finally, we propose improvements that allow us to apply our models to English-Turkish, a truly low-resource language pair.
