Non-Rigid Neural Radiance Fields: Reconstruction and Novel View Synthesis of a Deforming Scene from Monocular Video

Paper and Code

Dec 23, 2020

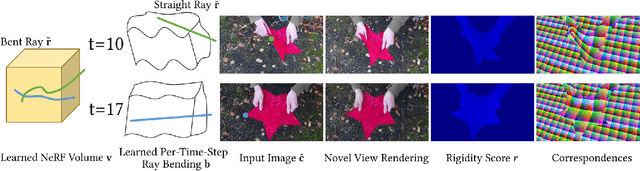

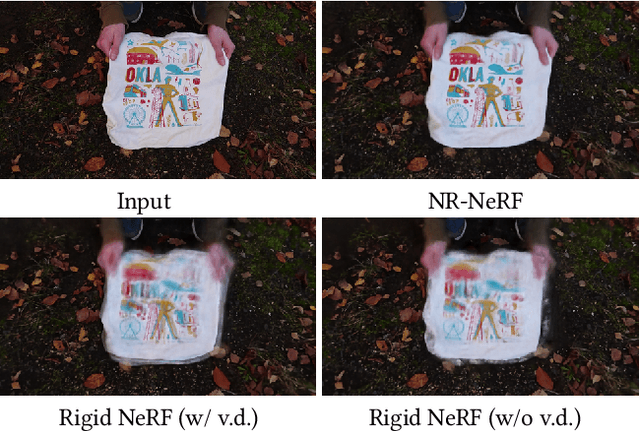

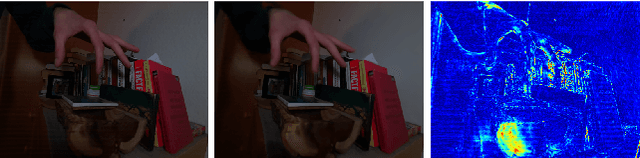

In this tech report, we present the current state of our ongoing work on reconstructing Neural Radiance Fields (NERF) of general non-rigid scenes via ray bending. Non-rigid NeRF (NR-NeRF) takes RGB images of a deforming object (e.g., from a monocular video) as input and then learns a geometry and appearance representation that not only allows to reconstruct the input sequence but also to re-render any time step into novel camera views with high fidelity. In particular, we show that a consumer-grade camera is sufficient to synthesize convincing bullet-time videos of short and simple scenes. In addition, the resulting representation enables correspondence estimation across views and time, and provides rigidity scores for each point in the scene. We urge the reader to watch the supplemental videos for qualitative results. We will release our code.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge