Non-PSD Matrix Sketching with Applications to Regression and Optimization

Paper and Code

Jun 16, 2021

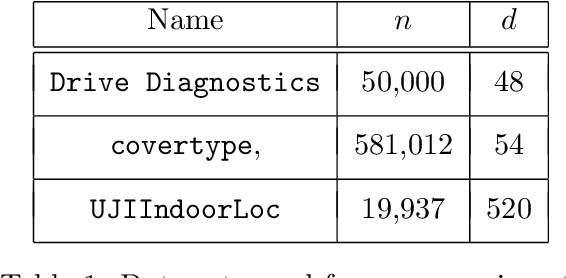

A variety of dimensionality reduction techniques have been applied for computations involving large matrices. The underlying matrix is randomly compressed into a smaller one, while approximately retaining many of its original properties. As a result, much of the expensive computation can be performed on the small matrix. The sketching of positive semidefinite (PSD) matrices is well understood, but there are many applications where the related matrices are not PSD, including Hessian matrices in non-convex optimization and covariance matrices in regression applications involving complex numbers. In this paper, we present novel dimensionality reduction methods for non-PSD matrices, as well as their ``square-roots", which involve matrices with complex entries. We show how these techniques can be used for multiple downstream tasks. In particular, we show how to use the proposed matrix sketching techniques for both convex and non-convex optimization, $\ell_p$-regression for every $1 \leq p \leq \infty$, and vector-matrix-vector queries.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge