NeuroADDA: Active Discriminative Domain Adaptation in Connectomic

Mar 08, 2025

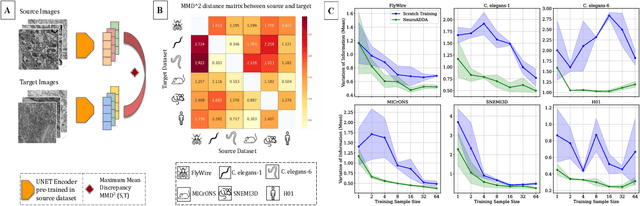

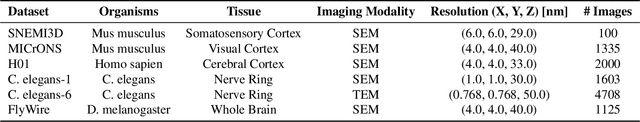

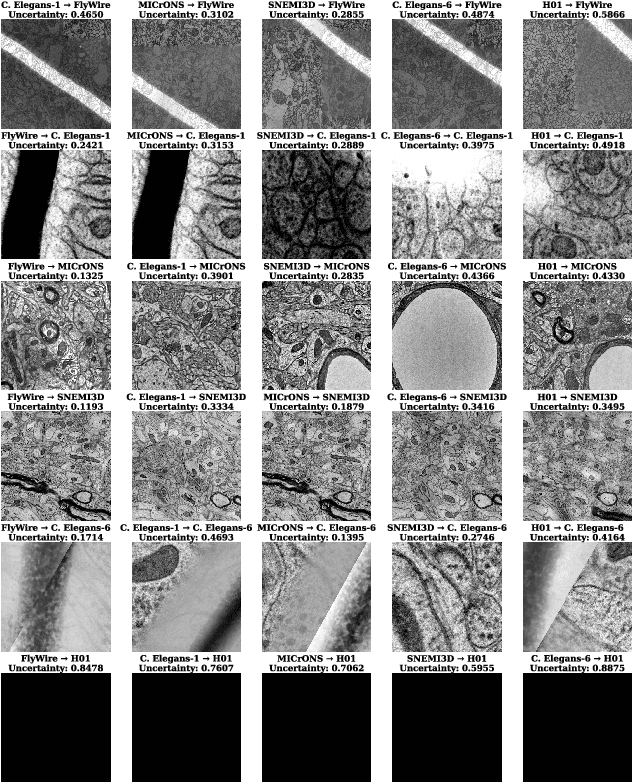

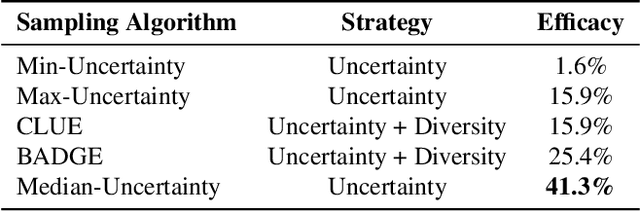

Training segmentation models from scratch has been the standard approach for new electron microscopy connectomics datasets. However, leveraging models pretrained on existing datasets could improve efficiency and performance under a constrained annotation budget. In this study, we investigate domain adaptation in connectomics by analyzing six major datasets spanning different organisms. We show that the Maximum Mean Discrepancy (MMD) between neuron image distributions serves as a reliable indicator of transferability and identifies the optimal source domain for transfer learning. Building on this, we introduce NeuroADDA, a method that combines optimal domain selection with source-free active learning to effectively adapt pretrained backbones to a new dataset. NeuroADDA consistently outperforms training from scratch across diverse datasets and fine-tuning sample sizes, with the largest gain observed at $n=4$ samples, where Variation of Information is reduced by 25-67%. Finally, our analysis of distributional differences among neuron images from multiple species in a learned feature space reveals that these domain "distances" correlate with the phylogenetic distance among those species.
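To make the source-selection idea concrete, the following is a minimal sketch of how MMD could be used to rank candidate source domains against a target. It is not the paper's implementation: the RBF kernel, the bandwidth `gamma`, the biased estimator, and the helper names `rbf_kernel`, `mmd2`, and `pick_source` are all assumptions made for illustration, and the random vectors stand in for image features that would in practice come from some learned embedding.

```python
import numpy as np

def rbf_kernel(a, b, gamma):
    # Pairwise squared Euclidean distances between rows of a and rows of b.
    sq = (a ** 2).sum(axis=1)[:, None] + (b ** 2).sum(axis=1)[None, :] - 2.0 * a @ b.T
    # Clip tiny negative values caused by floating-point round-off.
    return np.exp(-gamma * np.maximum(sq, 0.0))

def mmd2(x, y, gamma=1.0):
    """Biased estimate of the squared MMD between two samples (rows are feature vectors)."""
    k_xx = rbf_kernel(x, x, gamma).mean()
    k_yy = rbf_kernel(y, y, gamma).mean()
    k_xy = rbf_kernel(x, y, gamma).mean()
    return k_xx + k_yy - 2.0 * k_xy

def pick_source(target, sources, gamma=1.0):
    # Score every candidate source domain and return the one closest to the target.
    scores = {name: mmd2(feats, target, gamma) for name, feats in sources.items()}
    return min(scores, key=scores.get), scores

# Synthetic stand-ins for feature vectors (hypothetical data, not from the paper).
rng = np.random.default_rng(0)
target = rng.normal(0.0, 1.0, size=(200, 8))
sources = {
    "source_a": rng.normal(0.2, 1.0, size=(200, 8)),  # small shift from the target
    "source_b": rng.normal(2.0, 1.0, size=(200, 8)),  # large shift from the target
}
best, scores = pick_source(target, sources, gamma=1.0 / 8)
print(best, scores)
```

In this toy setup the source with the smaller mean shift receives the lower MMD score and is selected. In a real pipeline the kernel bandwidth would need tuning (a median-distance heuristic is a common choice), and the selected source's pretrained backbone would then be fine-tuned on the target samples.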
