Neural Networks Versus Conventional Filters for Inertial-Sensor-based Attitude Estimation

Jun 03, 2020

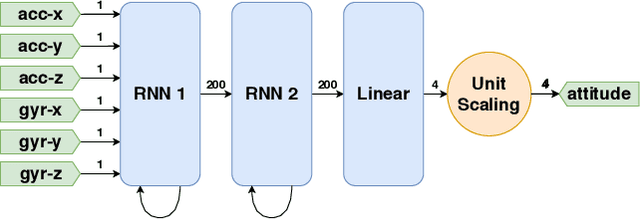

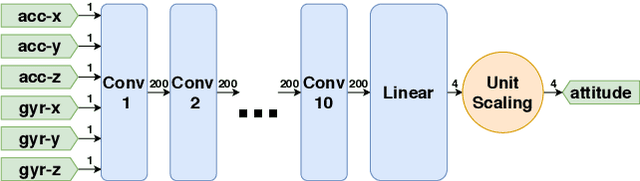

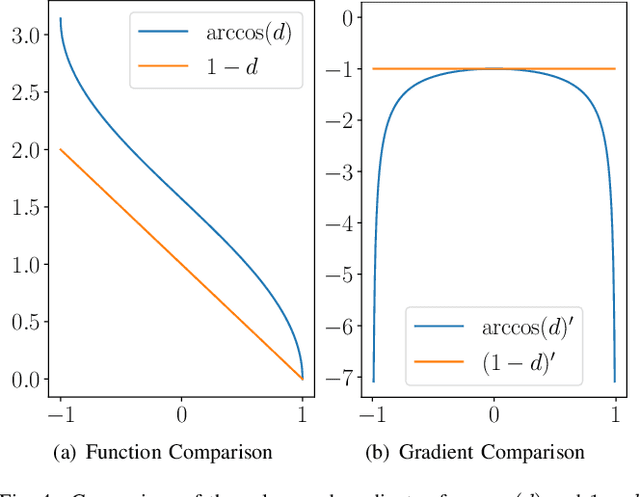

Inertial measurement units are commonly used to estimate the attitude of moving objects. Numerous nonlinear filter approaches have been proposed for solving the inherent sensor fusion problem. However, when a large range of different dynamic and static rotational and translational motions is considered, the attainable accuracy is limited by the need for situation-dependent adjustment of accelerometer and gyroscope fusion weights. We investigate to what extent these limitations can be overcome by means of artificial neural networks and how much domain-specific optimization of the neural network model is required to outperform the conventional filter solution. A diverse set of motion recordings with a marker-based optical ground truth is used for performance evaluation and comparison. The proposed neural networks are found to outperform the conventional filter across all motions only if domain-specific optimizations are introduced. We conclude that they are a promising tool for inertial-sensor-based real-time attitude estimation, but both expert knowledge and rich datasets are required to achieve top performance.
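
To make the fusion-weight issue concrete, here is a minimal sketch of a single-axis complementary filter, one simple kind of conventional filter. It is not the filter evaluated in the paper. The function name `complementary_filter`, the weight `alpha`, the axis convention `atan2(ax, az)`, and the sample values are all assumptions chosen for illustration.

```python
import math

def complementary_filter(gyro_rates, accels, dt, alpha=0.98):
    """Estimate one inclination angle (rad) from gyroscope and accelerometer samples.

    gyro_rates: angular rates about the sensing axis (rad/s), hypothetical input
    accels:     (ax, az) accelerometer samples (m/s^2), hypothetical axis convention
    dt:         sample period (s)
    alpha:      fixed fusion weight; closer to 1 trusts the gyroscope more
    """
    # Initialise from the first accelerometer sample (assumes the sensor starts at rest).
    angle = math.atan2(accels[0][0], accels[0][1])
    estimates = []
    for rate, (ax, az) in zip(gyro_rates, accels):
        gyro_angle = angle + rate * dt       # integrate the angular rate (drifts over time)
        accel_angle = math.atan2(ax, az)     # gravity direction (wrong during acceleration)
        angle = alpha * gyro_angle + (1.0 - alpha) * accel_angle
        estimates.append(angle)
    return estimates

# Static sensor tilted by 0.3 rad, with a constant gyroscope bias of 0.01 rad/s.
g, true_angle, n = 9.81, 0.3, 1000
accels = [(g * math.sin(true_angle), g * math.cos(true_angle))] * n
gyro_rates = [0.01] * n
estimates = complementary_filter(gyro_rates, accels, dt=0.01)
```

In this static case the accelerometer term keeps the gyroscope drift bounded. During strong translational acceleration the same fixed `alpha` would pull the estimate toward a wrong gravity direction, which is the kind of situation-dependent weight adjustment the abstract refers to.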
