Neural Chaos: A Spectral Stochastic Neural Operator

Paper and Code

Feb 17, 2025

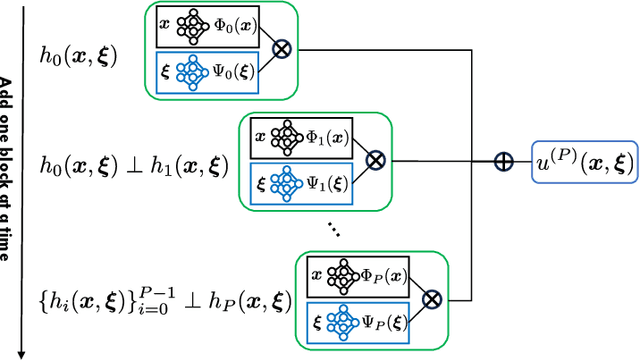

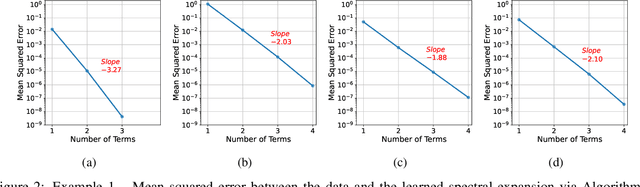

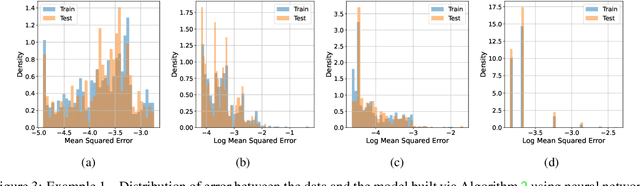

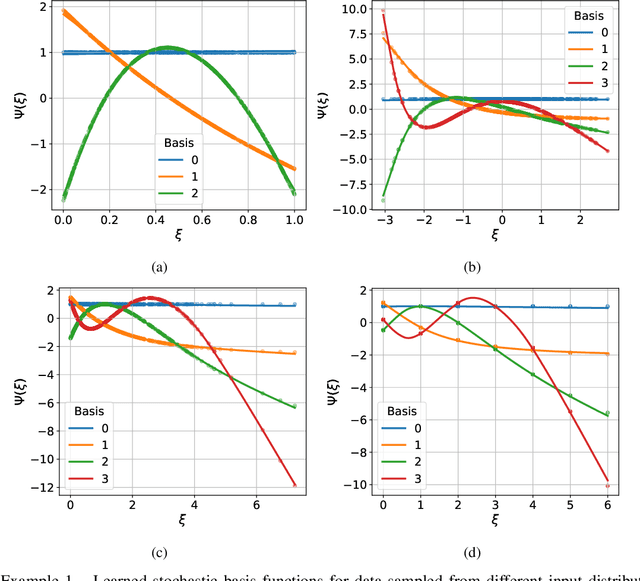

Building surrogate models with uncertainty quantification capabilities is essential for many engineering applications where randomness, such as variability in material properties, is unavoidable. Polynomial Chaos Expansion (PCE) is widely recognized as a go-to method for constructing stochastic solutions in both intrusive and non-intrusive ways. Its application becomes challenging, however, with complex or high-dimensional processes, as achieving accuracy requires higher-order polynomials, which can increase computational demands and/or the risk of overfitting. Furthermore, PCE requires specialized treatments to manage random variables that are not independent, and these treatments may be problem-dependent or may fail with increasing complexity. In this work, we adopt the spectral expansion formalism used in PCE; however, we replace the classical polynomial basis functions with neural network (NN) basis functions to leverage their expressivity. To achieve this, we propose an algorithm that identifies NN-parameterized basis functions in a purely data-driven manner, without any prior assumptions about the joint distribution of the random variables involved, whether independent or dependent. The proposed algorithm identifies each NN-parameterized basis function sequentially, ensuring they are orthogonal with respect to the data distribution. The basis functions are constructed directly on the joint stochastic variables without requiring a tensor product structure. This approach may offer greater flexibility for complex stochastic models, while simplifying implementation compared to the tensor product structures typically used in PCE to handle random vectors. We demonstrate the effectiveness of the proposed scheme through several numerical examples of varying complexity and provide comparisons with classical PCE.
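To make the idea of basis functions that are built one at a time and are orthogonal with respect to the data distribution more concrete, here is a minimal NumPy sketch. It is not the algorithm proposed in the paper: as a stand-in for trained NN basis functions it uses fixed random `tanh` features (a hypothetical choice), and it enforces orthogonality by Gram-Schmidt under the empirical inner product, i.e. the sample mean of the product of two functions. The sample size, covariance, target function `y`, and all function names are illustrative assumptions.

```python
import numpy as np

rng = np.random.default_rng(0)

# Dependent (correlated) random inputs; nothing below assumes independence.
n = 2000
cov = np.array([[1.0, 0.8], [0.8, 1.0]])
xi = rng.multivariate_normal(np.zeros(2), cov, size=n)

# Hypothetical quantity of interest evaluated at the samples.
y = np.sin(xi[:, 0]) + 0.5 * xi[:, 0] * xi[:, 1]


def random_feature(xi, rng):
    """Stand-in for an NN basis function: one random tanh unit on the joint input."""
    w = rng.normal(size=xi.shape[1])
    b = rng.normal()
    return np.tanh(xi @ w + b)


def build_basis(xi, n_basis, rng, tol=1e-6):
    """Add basis functions sequentially, orthonormal under the empirical inner product."""
    n_samples = xi.shape[0]
    basis = [np.ones(n_samples)]  # constant mode, unit empirical norm
    while len(basis) < n_basis:
        psi = random_feature(xi, rng)
        # Remove the components along all previously accepted basis functions.
        for phi in basis:
            psi = psi - np.mean(psi * phi) * phi
        norm = np.sqrt(np.mean(psi**2))
        if norm > tol:  # skip candidates that are numerically redundant
            basis.append(psi / norm)
    return np.stack(basis, axis=1)


Psi = build_basis(xi, n_basis=8, rng=rng)

# Empirical Gram matrix: close to the identity if the basis is orthonormal.
gram = Psi.T @ Psi / n

# Spectral coefficients by projection, then the truncated expansion of y.
coeffs = Psi.T @ y / n
y_hat = Psi @ coeffs
rel_err = np.linalg.norm(y - y_hat) / np.linalg.norm(y)
print("max |gram - I|:", np.abs(gram - np.eye(Psi.shape[1])).max())
print("relative error:", rel_err)
```

Because the basis is defined directly on the joint samples `xi`, no tensor product of one-dimensional bases is needed, and the same code runs unchanged whether the components of `xi` are correlated or not. In a data-driven scheme of the kind the abstract describes, the random features would presumably be replaced by networks trained to reduce the remaining residual.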
