NeuFair: Neural Network Fairness Repair with Dropout

Paper and Code

Jul 05, 2024

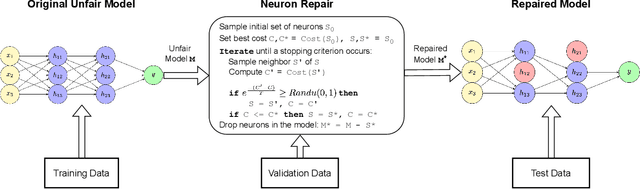

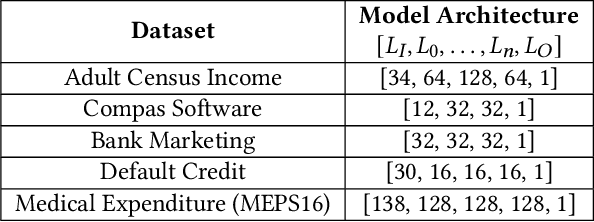

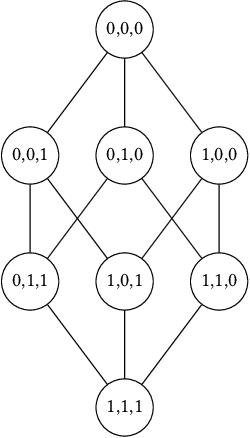

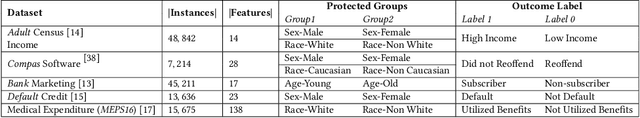

This paper investigates the neural dropout method as a post-processing bias mitigation for deep neural networks (DNNs). Neural-driven software solutions are increasingly applied in socially critical domains with significant fairness implications. While neural networks are exceptionally good at finding statistical patterns from data, they are notorious for overfitting to the training datasets that may encode and amplify existing biases from the historical data. Existing bias mitigation algorithms often require either modifying the input dataset or modifying the learning algorithms. We posit that the prevalent dropout methods that prevent over-fitting during training by randomly dropping neurons may be an effective and less intrusive approach to improve fairness of pre-trained DNNs. However, finding the ideal set of neurons to drop is a combinatorial problem. We propose NeuFair, a family of post-processing randomized algorithms that mitigate unfairness in pre-trained DNNs. Our randomized search is guided by an objective to minimize discrimination while maintaining the model utility. We show that our design of randomized algorithms provides statistical guarantees on finding optimal solutions, and we empirically evaluate the efficacy and efficiency of NeuFair in improving fairness, with minimal or no performance degradation. Our results show that NeuFair improves fairness by up to 69% and outperforms state-of-the-art post-processing bias techniques.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge