Network Compression via Central Filter

Paper and Code

Dec 13, 2021

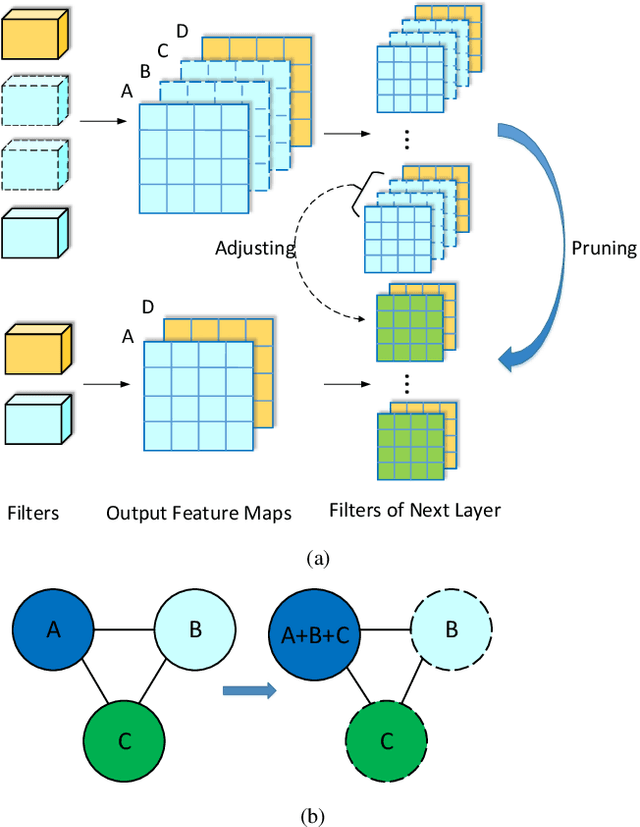

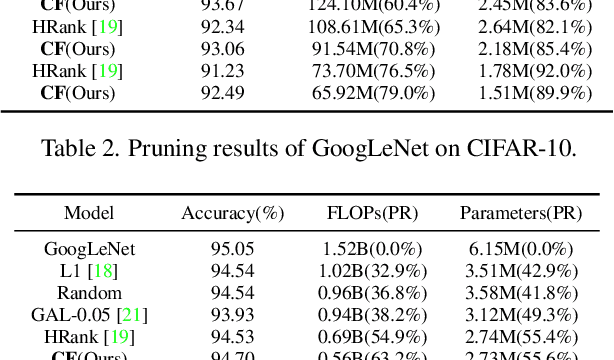

Neural network pruning has remarkable performance for reducing the complexity of deep network models. Recent network pruning methods usually focused on removing unimportant or redundant filters in the network. In this paper, by exploring the similarities between feature maps, we propose a novel filter pruning method, Central Filter (CF), which suggests that a filter is approximately equal to a set of other filters after appropriate adjustments. Our method is based on the discovery that the average similarity between feature maps changes very little, regardless of the number of input images. Based on this finding, we establish similarity graphs on feature maps and calculate the closeness centrality of each node to select the Central Filter. Moreover, we design a method to directly adjust weights in the next layer corresponding to the Central Filter, effectively minimizing the error caused by pruning. Through experiments on various benchmark networks and datasets, CF yields state-of-the-art performance. For example, with ResNet-56, CF reduces approximately 39.7% of FLOPs by removing 47.1% of the parameters, with even 0.33% accuracy improvement on CIFAR-10. With GoogLeNet, CF reduces approximately 63.2% of FLOPs by removing 55.6% of the parameters, with only a small loss of 0.35% in top-1 accuracy on CIFAR-10. With ResNet-50, CF reduces approximately 47.9% of FLOPs by removing 36.9% of the parameters, with only a small loss of 1.07% in top-1 accuracy on ImageNet. The codes can be available at https://github.com/8ubpshLR23/Central-Filter.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge