Neko: a Library for Exploring Neuromorphic Learning Rules

Paper and Code

May 01, 2021

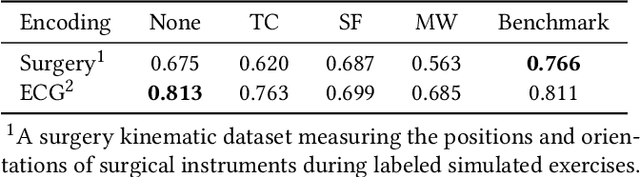

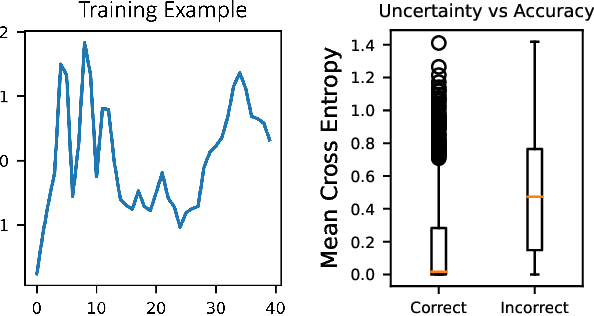

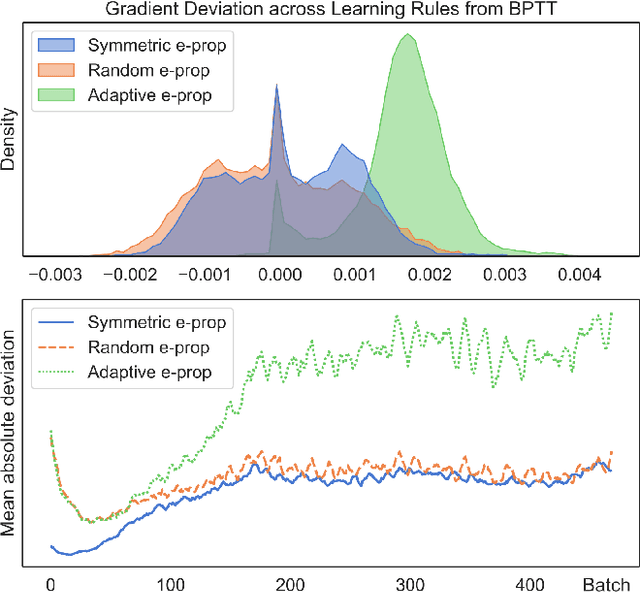

The field of neuromorphic computing is in a period of active exploration. While many tools have been developed to simulate neuronal dynamics or convert deep networks to spiking models, general software libraries for learning rules remain underexplored. This is partly due to the diverse, challenging nature of efforts to design new learning rules, which range from encoding methods to gradient approximations, from population approaches that mimic the Bayesian brain to constrained learning algorithms deployed on memristor crossbars. To address this gap, we present Neko, a modular, extensible library with a focus on aiding the design of new learning algorithms. We demonstrate the utility of Neko in three exemplar cases: online local learning, probabilistic learning, and analog on-device learning. Our results show that Neko can replicate the state-of-the-art algorithms and, in one case, lead to significant outperformance in accuracy and speed. Further, it offers tools including gradient comparison that can help develop new algorithmic variants. Neko is an open source Python library that supports PyTorch and TensorFlow backends.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge