Multimodal deep learning for mapping forest dominant height by fusing GEDI with earth observation data


Nov 20, 2023

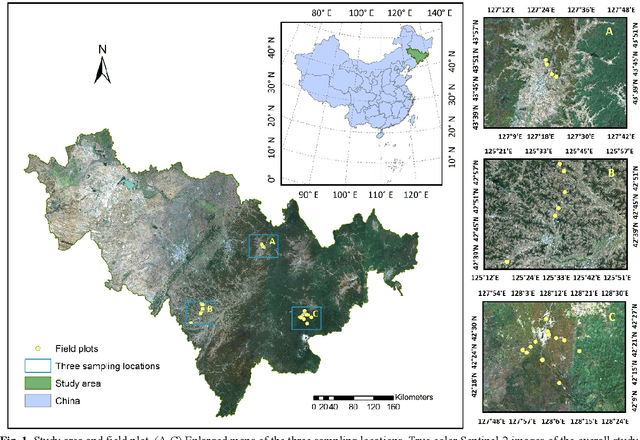

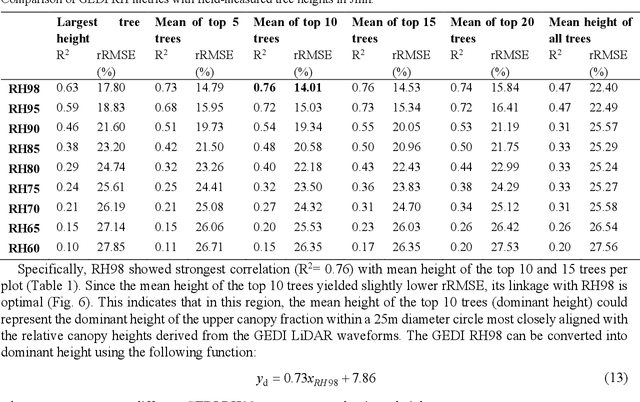

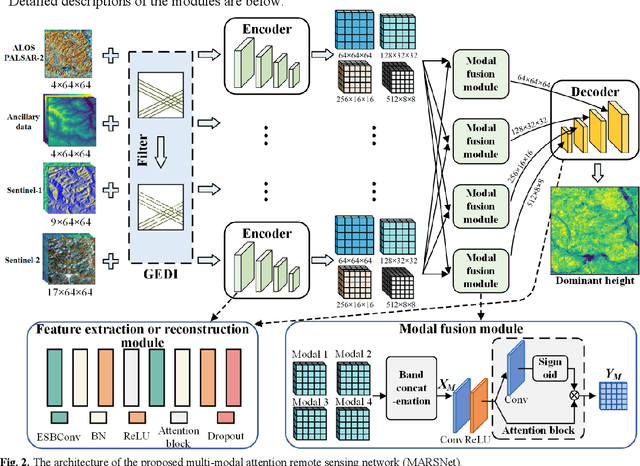

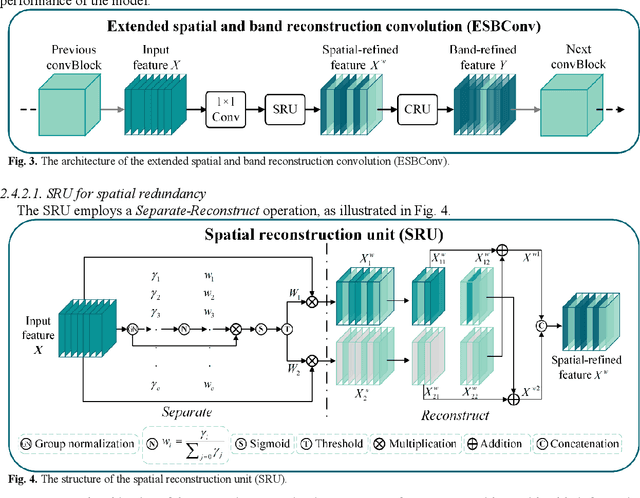

The integration of multisource remote sensing data and deep learning models offers new possibilities for accurately mapping forest height at high spatial resolution. We found that GEDI relative height (RH) metrics correlated strongly with dominant height, defined as the mean height of the 10 tallest trees measured in situ at the corresponding footprint locations. Consequently, we proposed a novel deep learning framework, the multi-modal attention remote sensing network (MARSNet), to estimate forest dominant height by extrapolating GEDI-derived dominant height using Sentinel-1 data, ALOS-2 PALSAR-2 data, Sentinel-2 optical data and ancillary data. MARSNet comprises a separate encoder for each remote sensing data modality to extract multi-scale features, and a shared decoder to fuse the features and estimate height. Using an individual encoder for each modality avoids interference across modalities and extracts distinct representations. To focus on the most useful information in each dataset, we incorporated extended spatial and band reconstruction convolution modules into the encoders, reducing the spatial and band redundancies prevalent in each data source. MARSNet estimated dominant height with an R² of 0.62 and an RMSE of 2.82 m, outperforming the widely used random forest approach, which attained an R² of 0.55 and an RMSE of 3.05 m. Finally, we applied the trained MARSNet model to generate wall-to-wall maps at 10 m resolution for Jilin, China. In independent validation against field measurements, MARSNet achieved an R² of 0.58 and an RMSE of 3.76 m, compared to 0.41 and 4.37 m for the random forest baseline. Our research demonstrates the effectiveness of a multimodal deep learning approach that fuses GEDI with SAR and passive optical imagery for improving the accuracy of high-resolution dominant height estimation.
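The abstract compares models by R² and RMSE. As a minimal sketch of how these two scores can be computed from paired field and predicted heights, the snippet below assumes that R² is the coefficient of determination (1 - SS_res / SS_tot); the abstract does not say which definition was used, and a squared Pearson correlation would give different values. The function names and the sample heights are hypothetical and are not taken from the paper.

```python
import math


def rmse(observed, predicted):
    """Root mean square error, in the same units as the inputs (metres here)."""
    if len(observed) != len(predicted) or not observed:
        raise ValueError("inputs must be non-empty and of equal length")
    squared_errors = [(o - p) ** 2 for o, p in zip(observed, predicted)]
    return math.sqrt(sum(squared_errors) / len(squared_errors))


def r_squared(observed, predicted):
    """Coefficient of determination, 1 - SS_res / SS_tot (assumed definition)."""
    if len(observed) != len(predicted) or not observed:
        raise ValueError("inputs must be non-empty and of equal length")
    mean_observed = sum(observed) / len(observed)
    ss_res = sum((o - p) ** 2 for o, p in zip(observed, predicted))
    ss_tot = sum((o - mean_observed) ** 2 for o in observed)
    if ss_tot == 0:
        raise ValueError("observed values have zero variance")
    return 1.0 - ss_res / ss_tot


# Hypothetical dominant heights in metres, for illustration only.
field_heights = [10.0, 12.0, 14.0, 16.0]
model_heights = [11.0, 12.0, 13.0, 16.0]

print(f"RMSE = {rmse(field_heights, model_heights):.2f} m")
print(f"R2   = {r_squared(field_heights, model_heights):.2f}")
```

Reporting both scores is useful because RMSE is expressed in metres, while R² depends on the spread of the reference heights. This matters when comparing the footprint-level test results with the independent field validation, which use different reference samples.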
