Multi-modal Self-Supervision from Generalized Data Transformations


Mar 09, 2020

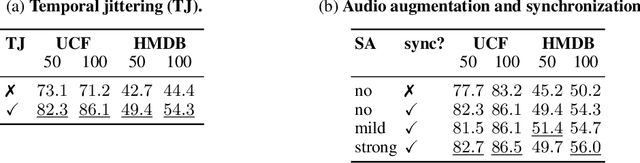

Self-supervised learning has advanced rapidly, with several results beating supervised models for pre-training feature representations. While most of these works have focused on new loss functions or tasks, little attention has been given to the data transformations that form the foundation for learning representations with desirable invariances. In this work, we introduce a framework for multi-modal data transformations that preserve semantics and induce the learning of high-level representations across modalities. We do this by combining two steps: inter-modality slicing and intra-modality augmentation. Using a contrastive loss as the training task, we show that choosing the right transformations is key and that our method yields state-of-the-art results on downstream video and audio classification tasks such as HMDB51, UCF101 and DCASE2014 with Kinetics-400 pretraining.
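
To make the idea of a contrastive training task across modalities more concrete, the following is a minimal sketch of a generic symmetric InfoNCE-style loss between paired video and audio embeddings. It is not the paper's implementation: the function name `cross_modal_info_nce`, the `temperature` value, and the use of NumPy are all assumptions made for illustration, and the slicing and augmentation steps are assumed to have already produced the two embedding batches.

```python
import numpy as np

def cross_modal_info_nce(video_emb, audio_emb, temperature=0.1):
    """Generic symmetric InfoNCE loss (illustrative; not the paper's code).

    video_emb, audio_emb: arrays of shape (batch, dim), where row i of each
    array is assumed to come from the same source clip (a positive pair).
    """
    # L2-normalise so the dot product is a cosine similarity.
    v = video_emb / np.linalg.norm(video_emb, axis=1, keepdims=True)
    a = audio_emb / np.linalg.norm(audio_emb, axis=1, keepdims=True)

    # Pairwise similarities; positives sit on the diagonal.
    logits = (v @ a.T) / temperature

    def diagonal_cross_entropy(scores):
        # Numerically stable log-softmax over each row.
        shifted = scores - scores.max(axis=1, keepdims=True)
        log_prob = shifted - np.log(np.exp(shifted).sum(axis=1, keepdims=True))
        return -np.mean(np.diag(log_prob))

    # Average the video-to-audio and audio-to-video directions.
    return 0.5 * (diagonal_cross_entropy(logits) + diagonal_cross_entropy(logits.T))


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    video = rng.normal(size=(8, 16))
    audio = video + 0.01 * rng.normal(size=(8, 16))  # hypothetical aligned pairs
    print("aligned loss:", cross_modal_info_nce(video, audio))
    print("mismatched loss:", cross_modal_info_nce(video, audio[::-1]))
```

In this sketch, correctly paired embeddings should give a much lower loss than deliberately mismatched ones, which is the signal a contrastive objective uses to pull together representations of the same clip across modalities.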
