Multi-Modal Hybrid Architecture for Pedestrian Action Prediction


Nov 16, 2020

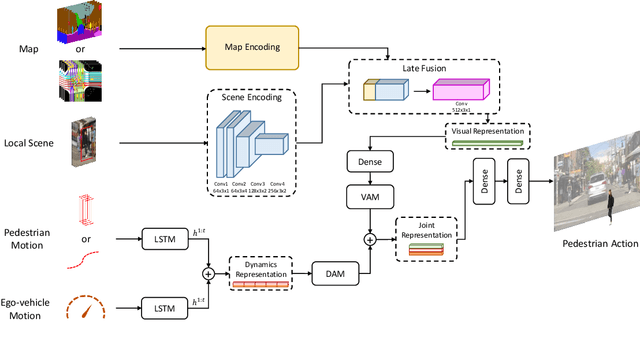

Pedestrian behavior prediction is one of the major challenges for intelligent driving systems in urban environments. Pedestrians exhibit a wide range of behaviors, and interpreting them adequately depends on several sources of information, such as pedestrian appearance, the states of other road users, and the layout of the environment. To address this problem, we propose a novel multi-modal prediction algorithm that incorporates different sources of information captured from the environment to predict the future crossing actions of pedestrians. The proposed model uses a hybrid learning architecture that combines feedforward and recurrent networks to analyze visual features of the environment and the dynamics of the scene. Using existing 2D pedestrian behavior benchmarks and a newly annotated 3D driving dataset, we show that the proposed model achieves state-of-the-art performance in pedestrian crossing prediction.
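
The abstract does not specify layer types, sizes, or the fusion scheme, so the following is only a minimal sketch of the general idea of a hybrid feedforward/recurrent predictor, not the authors' model. It assumes a single static visual feature vector handled by a feedforward layer, a sequence of per-frame dynamics vectors handled by a simple Elman-style recurrent update, and fusion by concatenation followed by a sigmoid. All names (`dense`, `rnn_step`, `predict_crossing`, `make_params`), the dimensions, and the random untrained weights are hypothetical.

```python
import math
import random


def dense(x, w, b):
    """Feedforward layer: tanh(W x + b)."""
    return [math.tanh(sum(wi * xi for wi, xi in zip(row, x)) + bi)
            for row, bi in zip(w, b)]


def rnn_step(h, x, w_h, w_x, b):
    """One Elman-style recurrent update: tanh(W_h h + W_x x + b)."""
    return [math.tanh(sum(a * hj for a, hj in zip(rh, h))
                      + sum(c * xj for c, xj in zip(rx, x)) + bi)
            for rh, rx, bi in zip(w_h, w_x, b)]


def rand_matrix(rows, cols, rng):
    """Small random weights; a trained model would learn these."""
    return [[rng.uniform(-0.5, 0.5) for _ in range(cols)] for _ in range(rows)]


def make_params(visual_dim, dyn_dim, hidden, seed=0):
    """Build untrained parameters for both branches and the output unit."""
    rng = random.Random(seed)
    return {
        "w_v": rand_matrix(hidden, visual_dim, rng),
        "b_v": [0.0] * hidden,
        "w_h": rand_matrix(hidden, hidden, rng),
        "w_x": rand_matrix(hidden, dyn_dim, rng),
        "b_h": [0.0] * hidden,
        "w_out": [rng.uniform(-0.5, 0.5) for _ in range(2 * hidden)],
        "b_out": 0.0,
    }


def predict_crossing(visual, dynamics, params):
    """Return a crossing probability in (0, 1).

    visual:   one feature vector (feedforward branch).
    dynamics: list of per-frame vectors (recurrent branch).
    """
    # Feedforward branch: encode the static visual features.
    v = dense(visual, params["w_v"], params["b_v"])

    # Recurrent branch: fold the observed sequence into a hidden state.
    h = [0.0] * len(params["b_h"])
    for step in dynamics:
        h = rnn_step(h, step, params["w_h"], params["w_x"], params["b_h"])

    # Fusion by concatenation (an assumption), then a sigmoid output.
    fused = v + h
    logit = sum(w * f for w, f in zip(params["w_out"], fused)) + params["b_out"]
    return 1.0 / (1.0 + math.exp(-logit))
```

For example, `predict_crossing([0.1] * 6, [[0.0, 0.1, 0.2, 0.3]] * 5, make_params(6, 4, 4))` returns a single probability. With untrained weights the value is meaningless; the sketch only shows how the two branches could be combined.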
