MSCDA: Multi-level Semantic-guided Contrast Improves Unsupervised Domain Adaptation for Breast MRI Segmentation in Small Datasets

Paper and Code

Jan 04, 2023

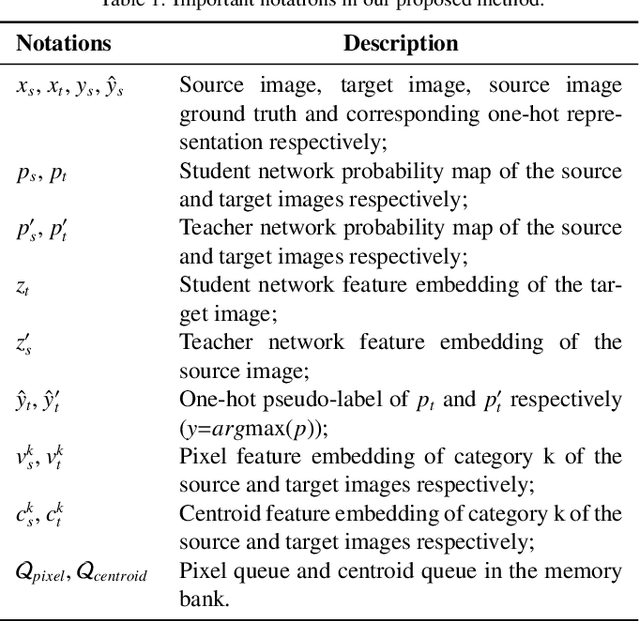

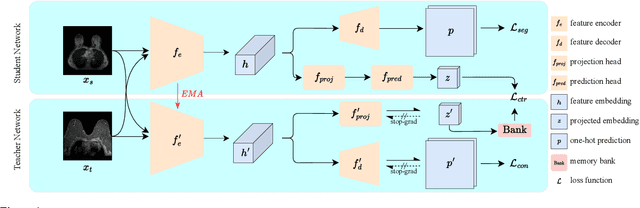

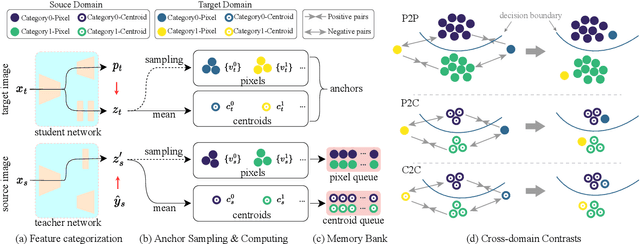

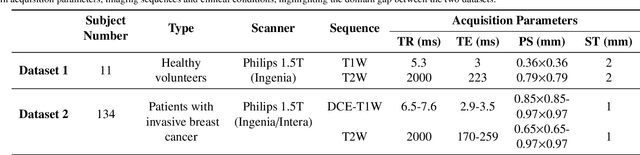

Deep learning (DL) applied to breast tissue segmentation in magnetic resonance imaging (MRI) has received increased attention in the last decade, however, the domain shift which arises from different vendors, acquisition protocols, and biological heterogeneity, remains an important but challenging obstacle on the path towards clinical implementation. Recently, unsupervised domain adaptation (UDA) methods have attempted to mitigate this problem by incorporating self-training with contrastive learning. To better exploit the underlying semantic information of the image at different levels, we propose a Multi-level Semantic-guided Contrastive Domain Adaptation (MSCDA) framework to align the feature representation between domains. In particular, we extend the contrastive loss by incorporating pixel-to-pixel, pixel-to-centroid, and centroid-to-centroid contrasts to integrate semantic information of images. We utilize a category-wise cross-domain sampling strategy to sample anchors from target images and build a hybrid memory bank to store samples from source images. Two breast MRI datasets were retrospectively collected: The source dataset contains non-contrast MRI examinations from 11 healthy volunteers and the target dataset contains contrast-enhanced MRI examinations of 134 invasive breast cancer patients. We set up experiments from source T2W image to target dynamic contrast-enhanced (DCE)-T1W image (T2W-to-T1W) and from source T1W image to target T2W image (T1W-to-T2W). The proposed method achieved Dice similarity coefficient (DSC) of 89.2\% and 84.0\% in T2W-to-T1W and T1W-to-T2W, respectively, outperforming state-of-the-art methods. Notably, good performance is still achieved with a smaller source dataset, proving that our framework is label-efficient.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge