MobilePhys: Personalized Mobile Camera-Based Contactless Physiological Sensing

Paper and Code

Jan 11, 2022

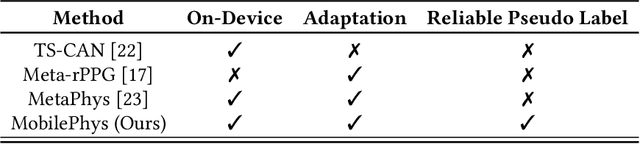

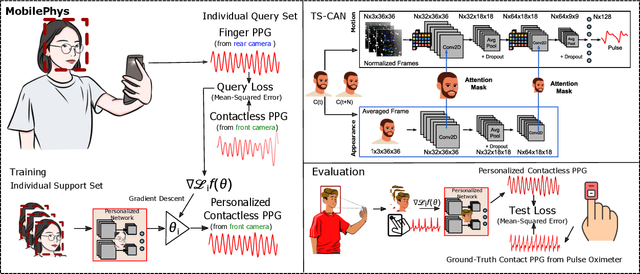

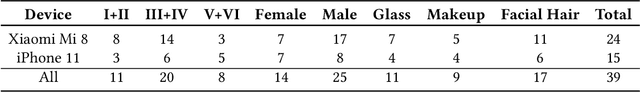

Camera-based contactless photoplethysmography refers to a set of popular techniques for contactless physiological measurement. The current state-of-the-art neural models are typically trained in a supervised manner using videos accompanied by gold standard physiological measurements. However, they often generalize poorly out-of-domain examples (i.e., videos that are unlike those in the training set). Personalizing models can help improve model generalizability, but many personalization techniques still require some gold standard data. To help alleviate this dependency, in this paper, we present a novel mobile sensing system called MobilePhys, the first mobile personalized remote physiological sensing system, that leverages both front and rear cameras on a smartphone to generate high-quality self-supervised labels for training personalized contactless camera-based PPG models. To evaluate the robustness of MobilePhys, we conducted a user study with 39 participants who completed a set of tasks under different mobile devices, lighting conditions/intensities, motion tasks, and skin types. Our results show that MobilePhys significantly outperforms the state-of-the-art on-device supervised training and few-shot adaptation methods. Through extensive user studies, we further examine how does MobilePhys perform in complex real-world settings. We envision that calibrated or personalized camera-based contactless PPG models generated from our proposed dual-camera mobile sensing system will open the door for numerous future applications such as smart mirrors, fitness and mobile health applications.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge