Mixtures of Laplace Approximations for Improved Post-Hoc Uncertainty in Deep Learning

Paper and Code

Nov 05, 2021

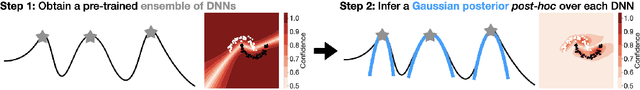

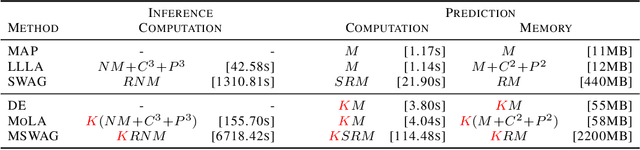

Deep neural networks are prone to overconfident predictions on outliers. Bayesian neural networks and deep ensembles have both been shown to mitigate this problem to some extent. In this work, we aim to combine the benefits of the two approaches by proposing to predict with a Gaussian mixture model posterior that consists of a weighted sum of Laplace approximations of independently trained deep neural networks. The method can be used post hoc with any set of pre-trained networks and only requires a small computational and memory overhead compared to regular ensembles. We theoretically validate that our approach mitigates overconfidence "far away" from the training data and empirically compare against state-of-the-art baselines on standard uncertainty quantification benchmarks.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge