Minimax Manifold Estimation

Sep 28, 2011

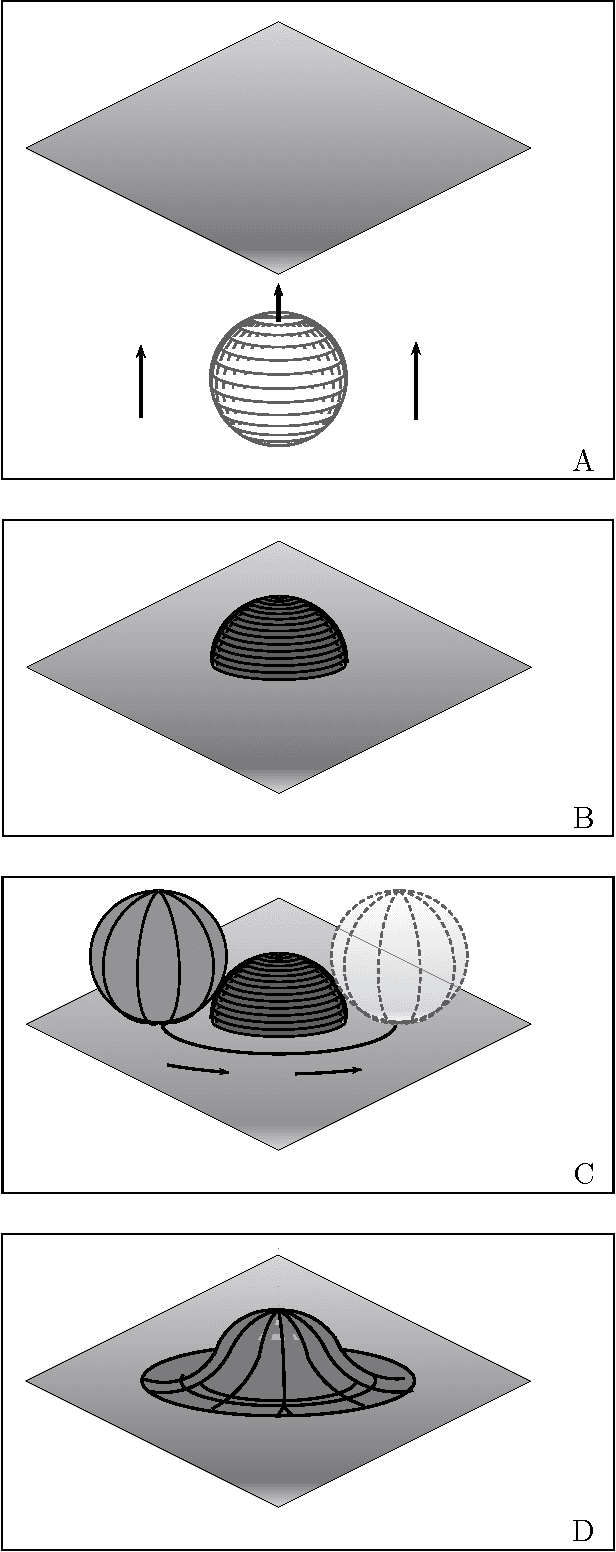

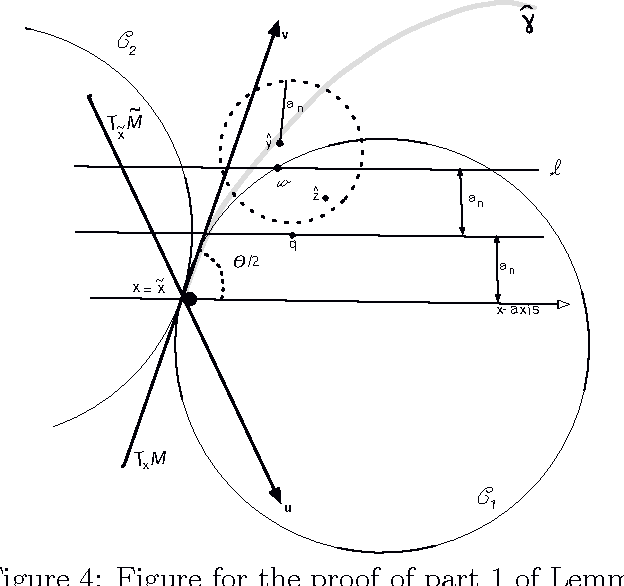

We find the minimax rate of convergence in Hausdorff distance for estimating a manifold M of dimension d embedded in R^D given a noisy sample from the manifold. We assume that the manifold satisfies a smoothness condition and that the noise distribution has compact support. We show that the optimal rate of convergence is n^{-2/(2+d)}. Thus, the minimax rate depends only on the dimension of the manifold, not on the dimension of the space in which M is embedded.
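To make the dependence on d concrete, the following is a minimal sketch that evaluates the stated rate n^{-2/(2+d)} for a few intrinsic dimensions. The function names `minimax_rate` and `samples_for_error` are hypothetical and introduced only for illustration. Multiplicative constants are ignored, so the numbers show scaling behavior, not actual error bounds. Note that the ambient dimension D never enters the computation.

```python
def minimax_rate(n: int, d: int) -> float:
    """Evaluate n^{-2/(2+d)}, the rate quoted in the abstract (constants omitted)."""
    if n <= 0 or d <= 0:
        raise ValueError("n and d must be positive")
    return n ** (-2.0 / (2.0 + d))


def samples_for_error(eps: float, d: int) -> float:
    """Solve n^{-2/(2+d)} = eps for n, i.e. n = eps^{-(2+d)/2} (constants omitted)."""
    if not 0.0 < eps < 1.0 or d <= 0:
        raise ValueError("need 0 < eps < 1 and d > 0")
    return eps ** (-(2.0 + d) / 2.0)


if __name__ == "__main__":
    # Rate at a fixed sample size for several intrinsic dimensions d.
    # The embedding dimension D is not an argument: the rate does not depend on it.
    for d in (1, 2, 5, 10):
        print(f"d={d:2d}  rate at n=10^4: {minimax_rate(10_000, d):.4g}  "
              f"n for eps=0.01: {samples_for_error(0.01, d):.3g}")
```

Under this scaling, halving the target Hausdorff error multiplies the required sample size by 2^{(2+d)/2}, so the cost grows with the manifold dimension d but is unaffected by D.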

* journal submission, revision with some errors corrected
