Minimal model of permutation symmetry in unsupervised learning

Apr 30, 2019

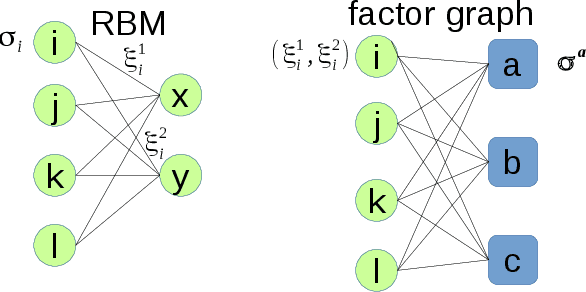

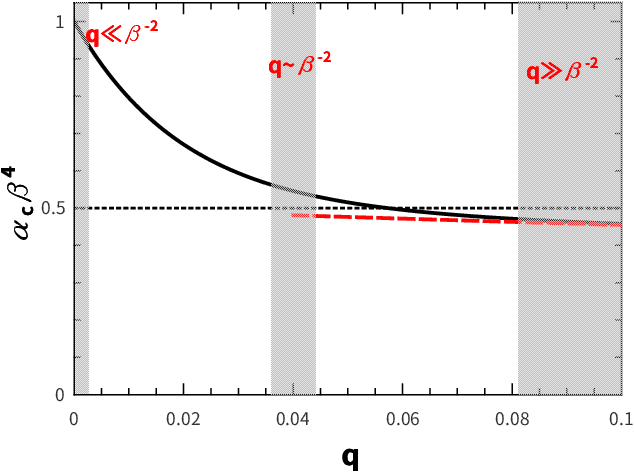

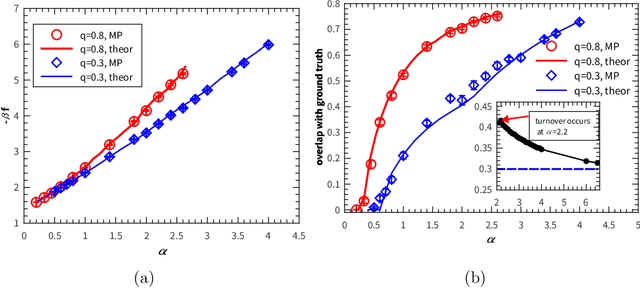

Permuting any two hidden units leaves the properties of a typical deep generative neural network invariant. This permutation symmetry plays an important role in understanding the computational performance of a broad class of neural networks with two or more hidden units. However, a theoretical study of the permutation symmetry is still lacking. Here, we propose a minimal model, a restricted Boltzmann machine with only two hidden units, to address how the permutation symmetry affects the critical learning data size at which concept formation (spontaneous symmetry breaking, in physics language) starts. We also semi-rigorously prove a conjecture that the critical data size is independent of the number of hidden units, provided this number is finite. Remarkably, we find that the embedded correlation between the two receptive fields of the hidden units reduces the critical data size. In particular, weakly correlated receptive fields significantly reduce the minimal data size that triggers the transition, given less noisy data. Inspired by the theory, we also propose an efficient fully distributed algorithm to infer the receptive fields of the hidden units. Overall, our results demonstrate that the permutation symmetry affects the critical data size and thereby the computational performance of related learning algorithms. All these effects can be probed analytically within the minimal model, providing theoretical insight into unsupervised learning in a more general context.
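
The permutation symmetry itself can be checked numerically. The following is a minimal sketch, not the paper's code: it assumes ±1 visible and hidden units, no biases, binary ±1 receptive fields, and a coupling scaled by an inverse temperature `beta` over the square root of the number of visible units. None of these choices is stated in the abstract, and the function name `log_unnormalized_marginal` is hypothetical. Under these assumptions, summing out the two hidden units gives a product of `2*cosh` factors, one per hidden unit, so swapping the two receptive fields cannot change the probability assigned to any visible configuration.

```python
import numpy as np

def log_unnormalized_marginal(sigma, W, beta=1.0):
    """Log of the unnormalized marginal over visible configurations.

    Assumed energy (illustrative): E = -(beta / sqrt(N)) * sum_a h_a * (W[:, a] . sigma),
    with hidden units h_a in {-1, +1}. Summing over h gives prod_a 2*cosh(field_a).
    """
    n_visible = W.shape[0]
    fields = beta * (sigma @ W) / np.sqrt(n_visible)  # shape: (n_samples, n_hidden)
    # logaddexp(x, -x) = log(exp(x) + exp(-x)) = log(2*cosh(x)), computed stably
    return np.sum(np.logaddexp(fields, -fields), axis=-1)

rng = np.random.default_rng(0)
n_visible = 8

# Two receptive fields (columns of W), assumed binary for this sketch
W = rng.choice([-1.0, 1.0], size=(n_visible, 2))
# A handful of random visible configurations
sigma = rng.choice([-1.0, 1.0], size=(5, n_visible))

# Permute the two hidden units by swapping the columns of W
W_swapped = W[:, ::-1]

original = log_unnormalized_marginal(sigma, W)
swapped = log_unnormalized_marginal(sigma, W_swapped)

# The marginal over visible units is identical under the permutation
permutation_invariant = np.allclose(original, swapped)
print(permutation_invariant)
```

Because the data distribution cannot distinguish the two labelings of the hidden units, any learning procedure must break this symmetry before the receptive fields can be inferred individually, which is the transition the abstract describes.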
