MIMRS: A Survey on Masked Image Modeling in Remote Sensing


Apr 07, 2025

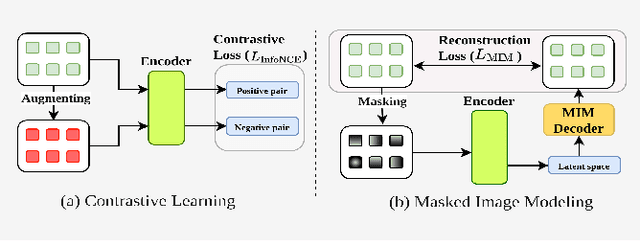

Masked Image Modeling (MIM) is a self-supervised learning technique that masks portions of an image, such as pixels, patches, or latent representations, and trains a model to predict the missing information from the visible context. It has emerged as a cornerstone of self-supervised learning, unlocking new possibilities in visual understanding by using unannotated data for pre-training. In remote sensing, MIM addresses incomplete data caused by cloud cover, occlusions, and sensor limitations, enabling applications such as cloud removal, multi-modal data fusion, and super-resolution. By synthesizing and critically analyzing recent advances, this survey (MIMRS) is a pioneering effort to chart the landscape of masked image modeling in remote sensing. We highlight state-of-the-art methodologies, applications, and future research directions, providing a foundational review to guide innovation in this rapidly evolving field.
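The patch-level form of the masking objective described above can be illustrated with a minimal sketch. It is not taken from the survey or from any specific method it reviews. The function names (`patchify`, `random_mask`, `masked_mse`), the 8 x 8 single-band tile, the patch size of 4, and the 75% mask ratio are all assumptions chosen for illustration, and a trivial "mean of visible patches" predictor stands in for a trained model.

```python
import random


def patchify(image, patch):
    """Split an H x W image (a list of rows) into flattened, non-overlapping patches."""
    h, w = len(image), len(image[0])
    if h % patch or w % patch:
        raise ValueError("image size must be divisible by the patch size")
    return [
        [image[r + i][c + j] for i in range(patch) for j in range(patch)]
        for r in range(0, h, patch)
        for c in range(0, w, patch)
    ]


def random_mask(num_patches, mask_ratio, rng):
    """Return one boolean per patch; True marks a patch hidden from the model."""
    num_masked = round(num_patches * mask_ratio)
    masked = set(rng.sample(range(num_patches), num_masked))
    return [i in masked for i in range(num_patches)]


def masked_mse(pred, target, mask):
    """Mean squared error averaged over the masked patches only."""
    errors = [
        sum((p - t) ** 2 for p, t in zip(pred_patch, target_patch)) / len(target_patch)
        for pred_patch, target_patch, m in zip(pred, target, mask)
        if m
    ]
    return sum(errors) / len(errors)


rng = random.Random(0)
# Hypothetical single-band tile with random values in [0, 1).
image = [[rng.random() for _ in range(8)] for _ in range(8)]

patches = patchify(image, 4)                 # 4 patches of 16 values each
mask = random_mask(len(patches), 0.75, rng)  # 3 of the 4 patches are hidden

# Placeholder predictor (an assumption, not a real model): every patch is
# predicted as the per-pixel mean of the visible patches.
visible = [p for p, m in zip(patches, mask) if not m]
mean_patch = [sum(col) / len(visible) for col in zip(*visible)]
pred = [mean_patch for _ in patches]

loss = masked_mse(pred, patches, mask)
```

In a real pipeline the placeholder predictor would be replaced by a trained encoder-decoder, and the loss would be minimized over many unlabeled tiles; computing it only on masked patches is one common design choice, not the only one.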
