Metric Learning-enhanced Optimal Transport for Biochemical Regression Domain Adaptation

Feb 16, 2022

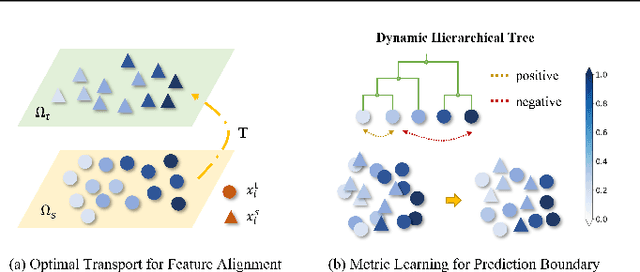

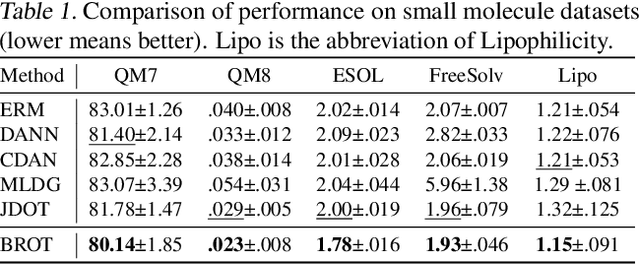

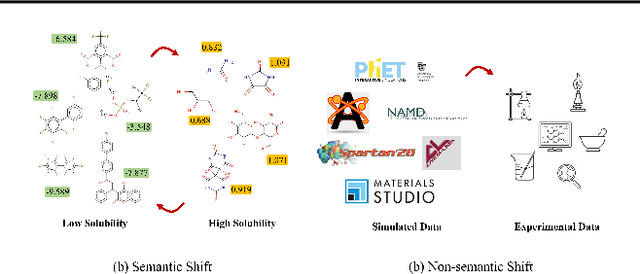

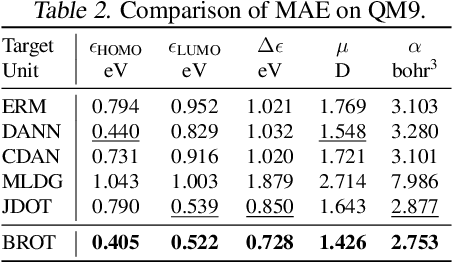

Generalizing knowledge beyond source domains is a crucial prerequisite for many biomedical applications, such as drug design and molecular property prediction. To meet this challenge, researchers have used optimal transport (OT) to align representations between the source and target domains. Yet existing OT algorithms are designed mainly for classification tasks. In this paper, we therefore consider regression tasks in both the unsupervised and semi-supervised settings. To exploit continuous labels, we propose novel metrics to measure domain distances and introduce a posterior variance regularizer on the transport plan. Further, while OT is computationally appealing, it suffers from ambiguous decision boundaries and from biased local data distributions introduced by mini-batch training. To address these issues, we propose coupling OT with metric learning to obtain more robust decision boundaries and reduce bias. Specifically, we present a dynamic hierarchical triplet loss that describes the global data distribution, with cluster centroids progressively adjusted across consecutive iterations. We evaluate our method on both unsupervised and semi-supervised learning tasks in biochemistry. Experiments show that the proposed method significantly outperforms state-of-the-art baselines across various benchmark datasets of small molecules and material crystals.
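The abstract does not spell out the proposed domain metrics or the exact form of the posterior variance regularizer. As a point of reference only, the sketch below shows mini-batch entropic OT (Sinkhorn iterations) with a cost that also compares continuous labels, in the style of joint distribution optimal transport (JDOT). It adds one possible reading of a "posterior variance" penalty: each normalized column of the transport plan is treated as a posterior over source samples, and the variance of the source labels under it is measured. The function names, the label weight `alpha`, the entropic regularization `eps`, the penalty weight `0.1`, and the use of model predictions `yt_hat` for unlabeled targets are all assumptions of this sketch, not the paper's method.

```python
import numpy as np


def joint_cost(Xs, ys, Xt, yt_hat, alpha=1.0):
    """JDOT-style cost: squared feature distance + alpha * squared label distance.

    yt_hat stands in for model predictions on the unlabeled target batch
    (an assumption of this sketch). The cost is rescaled to [0, 1] so that
    exp(-C / eps) in Sinkhorn does not underflow.
    """
    feat = ((Xs[:, None, :] - Xt[None, :, :]) ** 2).sum(axis=-1)
    lab = (ys[:, None] - yt_hat[None, :]) ** 2
    C = feat + alpha * lab
    return C / max(C.max(), 1e-12)


def sinkhorn(a, b, C, eps=0.1, n_iter=1000):
    """Entropic OT plan with row marginals a and column marginals b."""
    K = np.exp(-C / eps)
    u = np.ones_like(a)
    v = np.ones_like(b)
    for _ in range(n_iter):
        u = a / (K @ v)      # rescale rows to match a
        v = b / (K.T @ u)    # rescale columns to match b
    return u[:, None] * K * v[None, :]


def posterior_label_variance(P, ys):
    """Hypothetical 'posterior variance' penalty on a transport plan P.

    Each column of P, normalized to sum to one, is read as a posterior over
    source samples for one target sample; the function returns the mean
    variance of the source labels under those posteriors.
    """
    W = P / P.sum(axis=0, keepdims=True)
    mean = ys @ W                                        # shape (n_t,)
    var = (W * (ys[:, None] - mean[None, :]) ** 2).sum(axis=0)
    return var.mean()


# Usage on one random mini-batch (synthetic data, illustration only).
rng = np.random.default_rng(0)
Xs, ys = rng.normal(size=(8, 4)), rng.normal(size=8)
Xt, yt_hat = rng.normal(size=(6, 4)) + 0.5, rng.normal(size=6)
a, b = np.full(8, 1 / 8), np.full(6, 1 / 6)
C = joint_cost(Xs, ys, Xt, yt_hat)
P = sinkhorn(a, b, C)
# Hypothetical total: transport cost plus a weighted variance penalty.
loss = (P * C).sum() + 0.1 * posterior_label_variance(P, ys)
```

In a full model, `Xs` and `Xt` would be encoder outputs and the loss would be backpropagated through an autodiff framework; the POT library's `ot.sinkhorn` offers a tested Sinkhorn solver in place of the hand-written loop.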

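The dynamic hierarchical triplet loss is not specified in detail in the abstract. The sketch below illustrates only the "dynamic centroid" idea behind a centroid-based triplet loss: continuous labels are binned into clusters (an assumption), each cluster centroid is updated with an exponential moving average across iterations, and each embedding is pulled toward its own centroid and pushed away from the nearest other centroid by a margin. It is single-level, so it omits the hierarchy. The class name, binning scheme, `momentum`, and `margin` are hypothetical, and NumPy is used only to keep the example self-contained; a real implementation would compute the loss in an autodiff framework.

```python
import numpy as np


class DynamicCentroidTriplet:
    """Hypothetical single-level sketch of a triplet loss against moving centroids.

    Continuous labels are binned into n_bins clusters (an assumption); each
    cluster centroid is an exponential moving average of batch embeddings,
    so it reflects all mini-batches seen so far, not just the current one.
    """

    def __init__(self, n_bins, dim, y_min, y_max, momentum=0.9, margin=1.0):
        self.edges = np.linspace(y_min, y_max, n_bins + 1)
        self.centroids = np.zeros((n_bins, dim))
        self.initialized = np.zeros(n_bins, dtype=bool)
        self.momentum = momentum
        self.margin = margin

    def assign(self, y):
        # Interior edges only, so bin indices fall in 0 .. n_bins - 1.
        return np.digitize(y, self.edges[1:-1])

    def update(self, z, y):
        bins = self.assign(y)
        for k in np.unique(bins):
            batch_mean = z[bins == k].mean(axis=0)
            if self.initialized[k]:
                m = self.momentum
                self.centroids[k] = m * self.centroids[k] + (1 - m) * batch_mean
            else:
                self.centroids[k] = batch_mean
                self.initialized[k] = True

    def loss(self, z, y):
        bins = self.assign(y)
        # Euclidean distance from every embedding to every centroid: (n, n_bins).
        d = np.linalg.norm(z[:, None, :] - self.centroids[None, :, :], axis=-1)
        pos = d[np.arange(len(z)), bins]
        # Hardest negative: nearest initialized centroid of a different bin.
        own = np.arange(d.shape[1])[None, :] == bins[:, None]
        invalid = own | ~self.initialized[None, :]
        neg = np.where(invalid, np.inf, d).min(axis=1)
        return np.maximum(0.0, pos - neg + self.margin).mean()


# Usage: two well-separated label groups in a 2-D embedding space.
z = np.array([[0.0, 0.0], [0.1, 0.0], [10.0, 0.0], [10.1, 0.0]])
y = np.array([0.1, 0.2, 0.8, 0.9])
tl = DynamicCentroidTriplet(n_bins=2, dim=2, y_min=0.0, y_max=1.0)
tl.update(z, y)
print(tl.loss(z, y))  # → 0.0, since each point sits near its own centroid
```

In training, `update` would be called once per mini-batch, so the centroids summarize the batches seen so far rather than only the current one; this is the mini-batch bias that the abstract's global-distribution term is meant to reduce.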