Meta-Interpolation: Time-Arbitrary Frame Interpolation via Dual Meta-Learning

Paper and Code

Jul 27, 2022

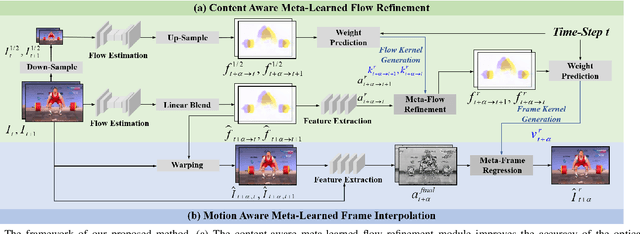

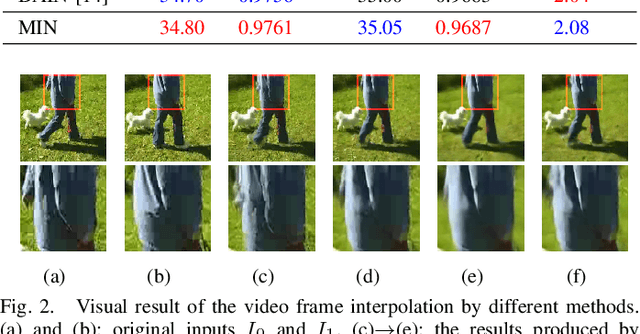

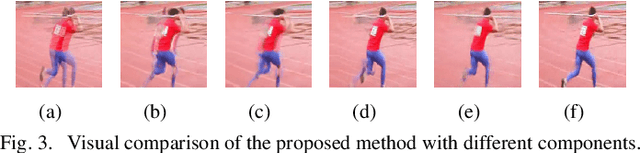

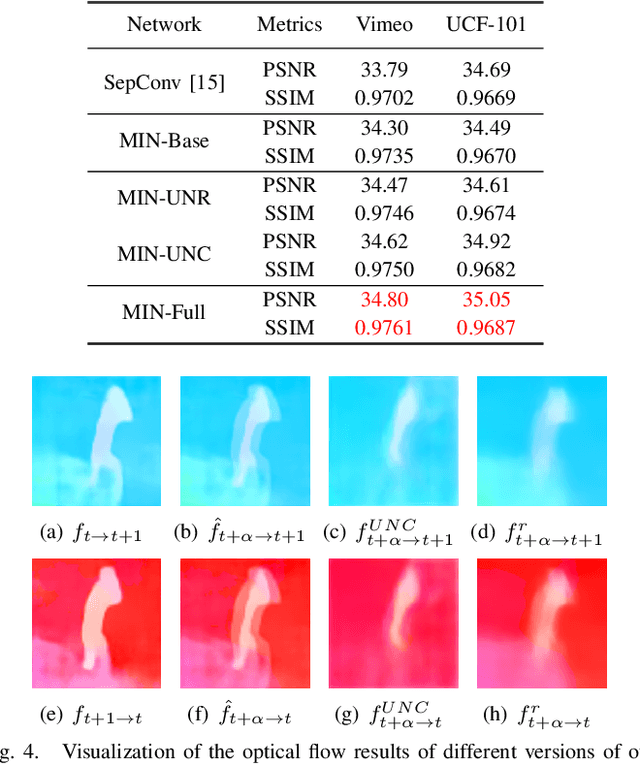

Existing video frame interpolation methods can only interpolate the frame at a given intermediate time-step, e.g. 1/2. In this paper, we aim to explore a more generalized kind of video frame interpolation, that at an arbitrary time-step. To this end, we consider processing different time-steps with adaptively generated convolutional kernels in a unified way with the help of meta-learning. Specifically, we develop a dual meta-learned frame interpolation framework to synthesize intermediate frames with the guidance of context information and optical flow as well as taking the time-step as side information. First, a content-aware meta-learned flow refinement module is built to improve the accuracy of the optical flow estimation based on the down-sampled version of the input frames. Second, with the refined optical flow and the time-step as the input, a motion-aware meta-learned frame interpolation module generates the convolutional kernels for every pixel used in the convolution operations on the feature map of the coarse warped version of the input frames to generate the predicted frame. Extensive qualitative and quantitative evaluations, as well as ablation studies, demonstrate that, via introducing meta-learning in our framework in such a well-designed way, our method not only achieves superior performance to state-of-the-art frame interpolation approaches but also owns an extended capacity to support the interpolation at an arbitrary time-step.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge