Meta Auxiliary Learning for Low-resource Spoken Language Understanding


Jun 26, 2022

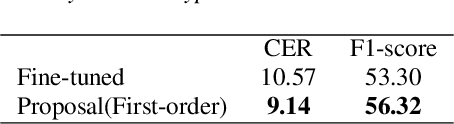

Spoken language understanding (SLU) treats automatic speech recognition (ASR) and natural language understanding (NLU) as a unified task and usually suffers from data scarcity. We exploit an ASR and NLU joint training method based on meta auxiliary learning to improve the performance of low-resource SLU tasks, using only abundant manual transcriptions of speech data. One clear advantage of this method is that it provides a flexible framework for low-resource SLU training without requiring any further semantic annotations. In particular, an NLU model serves as the label generation network and predicts intent and slot tags from text; a multi-task network trains the ASR task and the SLU task synchronously from speech; and the predictions of the label generation network are passed to the multi-task network as semantic targets. Experiments on the public CATSLU dataset demonstrate the efficiency of the proposed algorithm, which produces ASR hypotheses better suited to the downstream NLU task.
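The data flow described in the abstract can be illustrated with a minimal Python sketch. It is not the authors' implementation: `generate_labels` is a hypothetical rule-based stand-in for the NLU label generation network, `multitask_loss` and its `slu_weight` parameter are assumed names, and the label names (`navigate`, `B-dest`) are invented for illustration. The sketch shows only how generated intent and slot tags could serve as semantic targets next to the ASR target; it omits how either network is updated under meta auxiliary learning.

```python
import math


def generate_labels(transcript):
    """Hypothetical stand-in for the label generation network.

    Maps a manual transcription to an intent and one slot tag per token.
    A real system would use a trained NLU model here.
    """
    tokens = transcript.split()
    intent = "navigate" if "to" in tokens else "other"
    slots = [
        "B-dest" if i > 0 and tokens[i - 1] == "to" else "O"
        for i in range(len(tokens))
    ]
    return intent, slots


def cross_entropy(probs, target):
    """Negative log-likelihood of `target` under a dict of class probabilities."""
    return -math.log(probs[target])


def multitask_loss(asr_token_probs, transcript, intent_probs, slot_probs,
                   slu_weight=1.0):
    """Combine the ASR loss with an SLU loss whose targets are generated labels.

    The transcript supplies the ASR targets directly; the intent and slot
    targets come from `generate_labels`, so no semantic annotation is needed.
    """
    tokens = transcript.split()
    intent, slots = generate_labels(transcript)

    # ASR branch: average token-level loss against the manual transcription.
    asr_loss = sum(
        cross_entropy(p, t) for p, t in zip(asr_token_probs, tokens)
    ) / len(tokens)

    # SLU branch: intent loss plus average slot loss against generated labels.
    slot_loss = sum(
        cross_entropy(p, s) for p, s in zip(slot_probs, slots)
    ) / len(slots)
    slu_loss = cross_entropy(intent_probs, intent) + slot_loss

    return asr_loss + slu_weight * slu_loss
```

For the transcript `"go to paris"`, the stand-in generator labels the utterance with the intent `navigate` and tags the token after `to` as `B-dest`; the multi-task loss then penalizes the speech-side predictions against those generated targets as well as against the transcript itself.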
