MergeComp: A Compression Scheduler for Scalable Communication-Efficient Distributed Training


Mar 28, 2021

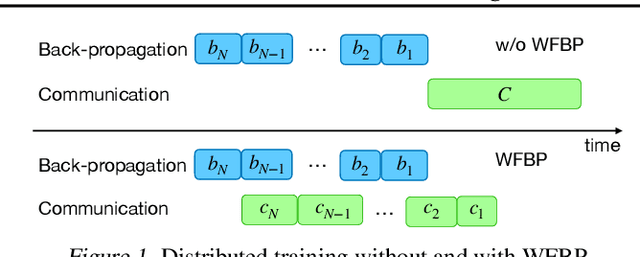

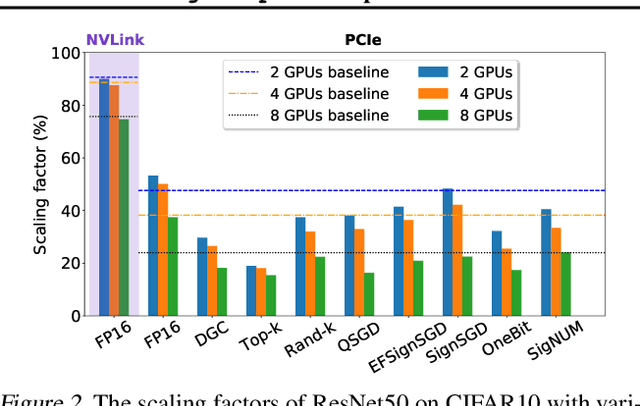

Large-scale distributed training is increasingly communication bound. Many gradient compression algorithms have been proposed to reduce communication overhead and improve scalability. However, gradient compression has been observed to harm the performance of distributed training in some cases. In this paper, we propose MergeComp, a compression scheduler that optimizes the scalability of communication-efficient distributed training. It automatically schedules compression operations to optimize the performance of compression algorithms without knowledge of model architectures or system parameters. We have applied MergeComp to nine popular compression algorithms. Our evaluations show that MergeComp can improve the performance of compression algorithms by up to 3.83x without losing accuracy. It can even achieve a scaling factor of up to 99% for distributed training over high-speed networks.
