Memorized action chunking with Transformers: Imitation learning for vision-based tissue surface scanning

Paper and Code

Nov 06, 2024

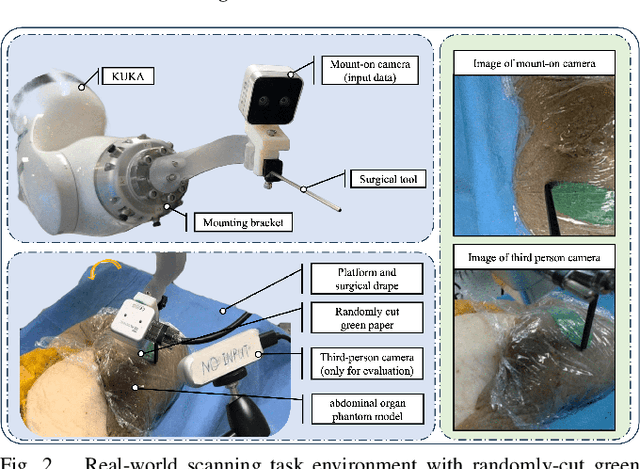

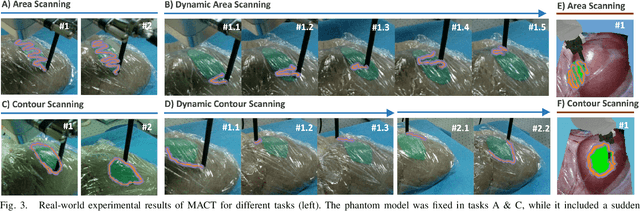

Optical sensing technologies are emerging technologies used in cancer surgeries to ensure the complete removal of cancerous tissue. While point-wise assessment has many potential applications, incorporating automated large area scanning would enable holistic tissue sampling. However, such scanning tasks are challenging due to their long-horizon dependency and the requirement for fine-grained motion. To address these issues, we introduce Memorized Action Chunking with Transformers (MACT), an intuitive yet efficient imitation learning method for tissue surface scanning tasks. It utilizes a sequence of past images as historical information to predict near-future action sequences. In addition, hybrid temporal-spatial positional embeddings were employed to facilitate learning. In various simulation settings, MACT demonstrated significant improvements in contour scanning and area scanning over the baseline model. In real-world testing, with only 50 demonstration trajectories, MACT surpassed the baseline model by achieving a 60-80% success rate on all scanning tasks. Our findings suggest that MACT is a promising model for adaptive scanning in surgical settings.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge