Measuring and Manipulating Knowledge Representations in Language Models

Paper and Code

Apr 03, 2023

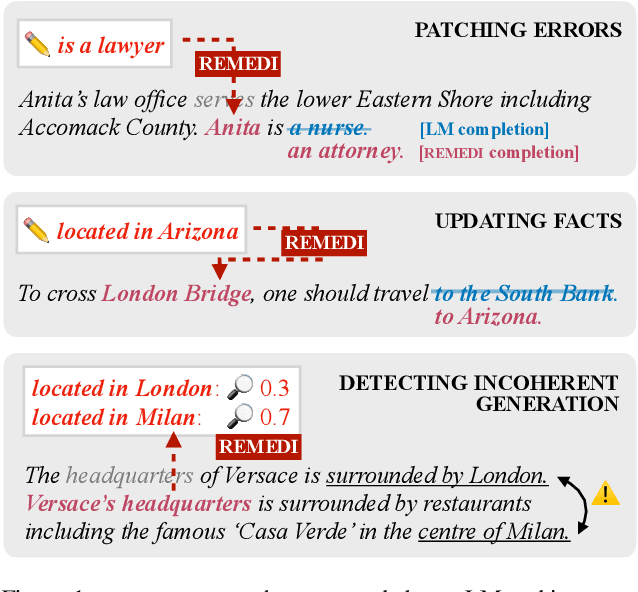

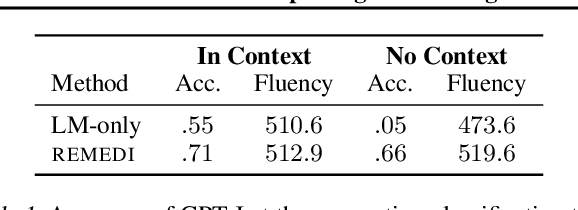

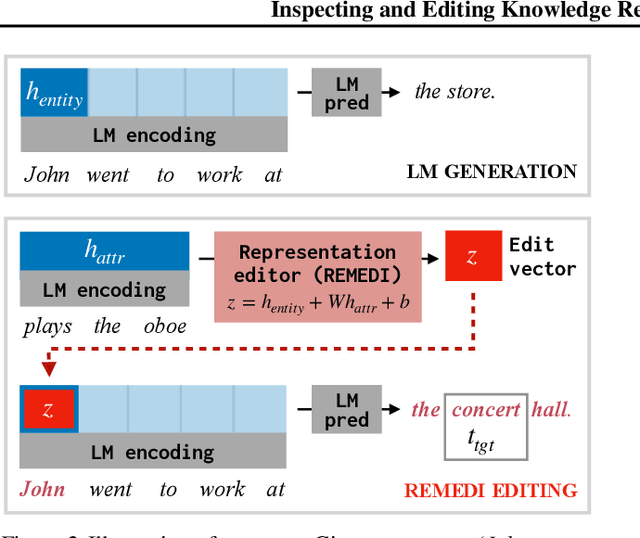

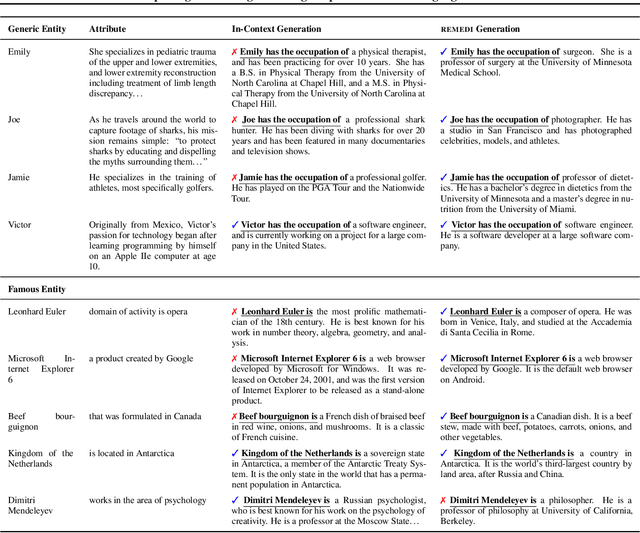

Neural language models (LMs) represent facts about the world described by text. Sometimes these facts derive from training data (in most LMs, a representation of the word banana encodes the fact that bananas are fruits). Sometimes facts derive from input text itself (a representation of the sentence "I poured out the bottle" encodes the fact that the bottle became empty). Tools for inspecting and modifying LM fact representations would be useful almost everywhere LMs are used: making it possible to update them when the world changes, to localize and remove sources of bias, and to identify errors in generated text. We describe REMEDI, an approach for querying and modifying factual knowledge in LMs. REMEDI learns a map from textual queries to fact encodings in an LM's internal representation system. These encodings can be used as knowledge editors: by adding them to LM hidden representations, we can modify downstream generation to be consistent with new facts. REMEDI encodings can also be used as model probes: by comparing them to LM representations, we can ascertain what properties LMs attribute to mentioned entities, and predict when they will generate outputs that conflict with background knowledge or input text. REMEDI thus links work on probing, prompting, and model editing, and offers steps toward general tools for fine-grained inspection and control of knowledge in LMs.
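
The two uses described above, editing by adding an encoding to a hidden representation and probing by comparing an encoding to a hidden representation, can be illustrated with a toy sketch. This is not the REMEDI implementation: the affine map (`W`, `b`), the dot-product comparison, the vector sizes, and all function names are assumptions chosen for illustration, and the numbers are made up rather than taken from any LM.

```python
# Toy illustration only: hypothetical names and hand-picked numbers,
# not the authors' code or learned parameters.

def encode_fact(W, b, attribute_vec):
    """Map an attribute vector to a fact encoding in hidden-state space.

    Assumes an affine map (W @ a + b); the abstract only says a map is learned.
    """
    return [sum(w * a for w, a in zip(row, attribute_vec)) + bias
            for row, bias in zip(W, b)]

def apply_edit(entity_hidden, fact_encoding):
    """Editor use: add the fact encoding to the entity's hidden representation."""
    return [h + d for h, d in zip(entity_hidden, fact_encoding)]

def probe_score(entity_hidden, fact_encoding):
    """Probe use: compare an encoding to a representation (dot product assumed)."""
    return sum(h * d for h, d in zip(entity_hidden, fact_encoding))

def predict_attribute(entity_hidden, encodings):
    """Return the attribute whose encoding best matches the representation."""
    return max(encodings, key=lambda name: probe_score(entity_hidden, encodings[name]))

# Hypothetical "learned" map from 2-d attribute vectors to 3-d hidden states.
W = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5]]
b = [0.0, 0.0, 0.0]

encodings = {
    "empty": encode_fact(W, b, [1.0, 0.0]),
    "full": encode_fact(W, b, [0.0, 1.0]),
}

# Hypothetical hidden representation of the entity "bottle".
bottle = [0.25, 0.5, -0.125]

before = predict_attribute(bottle, encodings)
edited = apply_edit(bottle, encodings["empty"])
after = predict_attribute(edited, encodings)
```

In this toy setup the probe initially favors "full" for the bottle representation, and adding the "empty" encoding shifts the representation so that the same probe favors "empty". In an actual LM the edited representation would be fed back into the forward pass so that downstream generation reflects the new fact.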