Marginal Contribution Feature Importance -- an Axiomatic Approach for The Natural Case

Oct 15, 2020

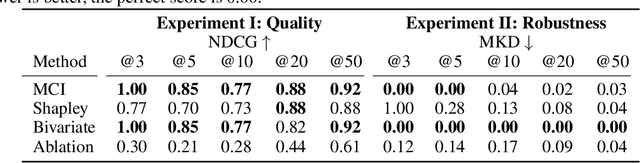

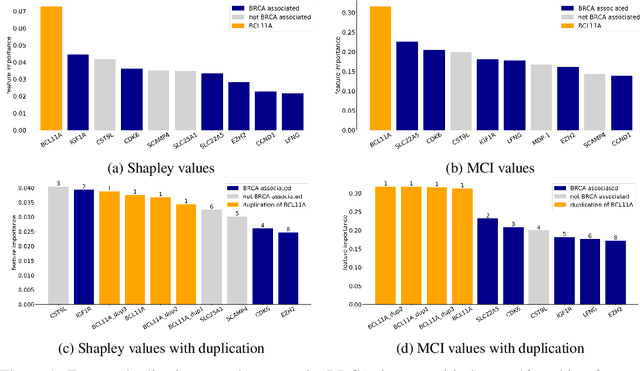

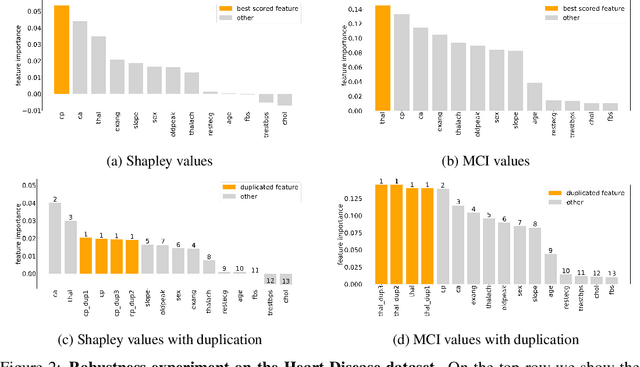

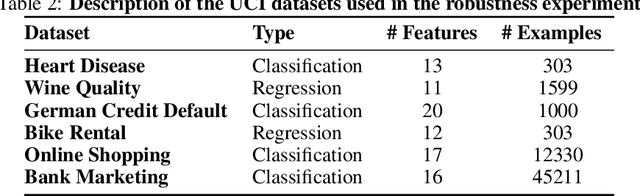

When training a predictive model over medical data, the goal is sometimes to gain insights about a certain disease. In such cases, it is common to use feature importance as a tool to highlight significant factors contributing to that disease. As there are many existing methods for computing feature importance scores, understanding their relative merits is not trivial. Further, the diversity of scenarios in which they are used leads to different expectations from the feature importance scores. It is common to distinguish local scores, which focus on individual predictions, from global scores, which look at the contribution of a feature to the model. Another important division separates model scenarios, in which the goal is to understand the predictions of a given model, from natural scenarios, in which the goal is to understand a phenomenon such as a disease. We develop a set of axioms that represent the properties expected from a feature importance function in the natural scenario and prove that exactly one function satisfies all of them: the Marginal Contribution Feature Importance (MCI). We analyze the theoretical and empirical properties of this function and compare it to other feature importance scores. While our focus is the natural scenario, we suggest that our axiomatic approach could be carried out in other scenarios too.
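
The abstract names MCI without giving its formula. The following is a minimal sketch, assuming the definition from the full paper: a feature's score is its largest marginal contribution to an evaluation function ν over all subsets of the remaining features, where ν is monotone and ν(∅) = 0. The names `mci` and `toy_nu`, the feature names, and the toy values are hypothetical and chosen only for illustration. The brute-force enumeration is exponential in the number of features and is not meant to reflect how the authors compute the score.

```python
from itertools import combinations


def mci(features, nu):
    """Brute-force sketch of MCI.

    For each feature f, return the maximum of nu(S | {f}) - nu(S)
    over all subsets S of the other features.
    Assumes nu is monotone with nu(empty set) == 0, so gains are >= 0.
    """
    scores = {}
    for f in features:
        others = [g for g in features if g != f]
        best = 0.0
        # Enumerate every subset of the remaining features (exponential cost).
        for size in range(len(others) + 1):
            for subset in combinations(others, size):
                s = frozenset(subset)
                gain = nu(s | {f}) - nu(s)
                best = max(best, gain)
        scores[f] = best
    return scores


def toy_nu(s):
    """Hypothetical evaluation function (illustration only).

    "a" and "a_copy" carry the same information; "b" adds a separate part.
    """
    value = 0.0
    if "a" in s or "a_copy" in s:
        value += 0.6
    if "b" in s:
        value += 0.3
    return value


scores = mci(["a", "a_copy", "b"], toy_nu)
for name, score in scores.items():
    print(f"{name}: {score:.2f}")
```

In this toy setup the duplicated features `a` and `a_copy` receive the same score, because the maximum is attained on a subset that does not contain the other copy. This illustrates why a maximum over subsets suits the natural scenario, where a correlated feature should not be penalized for being redundant.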
