Map-based Visual-Inertial Localization: Consistency and Complexity


Apr 26, 2022

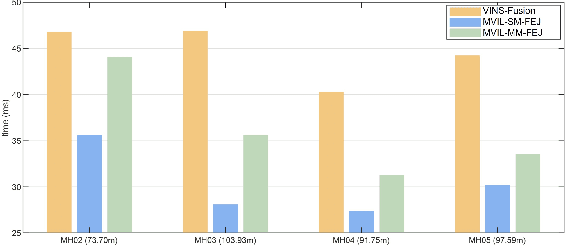

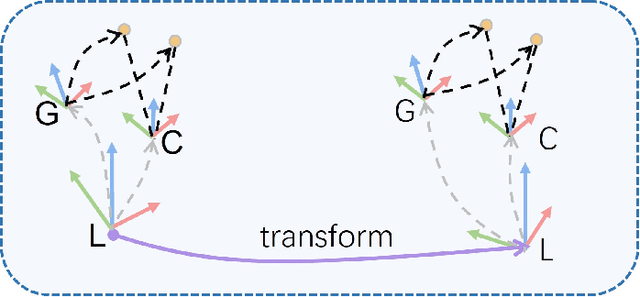

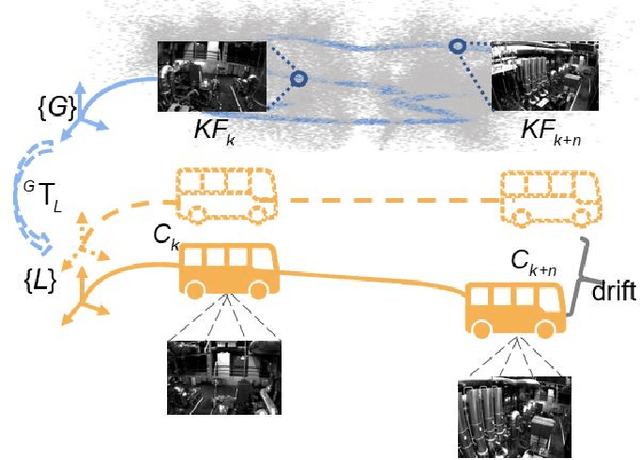

Drift-free localization is essential for autonomous vehicles. In this paper, we address the problem by proposing a filter-based framework that integrates visual-inertial odometry with measurements of features in a pre-built map. In this framework, the transformation between the odometry frame and the map frame is augmented into the state and estimated on the fly. In addition, we maintain only the keyframe poses in the map and employ a Schmidt extended Kalman filter to update the state partially, so that the uncertainty of the map information is considered consistently at low computational cost. Moreover, we demonstrate theoretically that the ever-changing linearization points of the estimated state can introduce spurious information into the augmented system and make the original four-dimensional unobservable subspace vanish, leading to inconsistent estimation in practice. To mitigate this problem, we employ first-estimate Jacobians (FEJ) to maintain the correct observability properties of the augmented system. Furthermore, we introduce an observability-constrained updating method to compensate for the significant error accumulated during a long-term absence of map-based measurements (which can span 3 minutes and 1 km). Simulations validate the consistency of the proposed algorithm's estimates. In real-world experiments, the proposed algorithm runs successfully on four kinds of datasets with lower computational cost (20% time saving) and better estimation accuracy (45% trajectory error reduction) than the baseline algorithm VINS-Fusion, whereas VINS-Fusion fails to give bounded localization performance on three of the four datasets because of its inconsistent estimation.
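The partial update mentioned above can be illustrated with a generic Schmidt-Kalman measurement update. The following is a minimal sketch under stated assumptions, not the paper's implementation: the function name `schmidt_ekf_update`, the state ordering (active block first, nuisance block second), and all argument names are hypothetical. Here the "active" block stands in for the states that are corrected (for example, the odometry state and the odometry-to-map transformation), and the "nuisance" block stands in for the map keyframe poses, whose estimates and marginal covariance are left untouched while their cross-correlation with the active states is still tracked.

```python
import numpy as np

def schmidt_ekf_update(x_a, P, r, H_a, H_n, R):
    """Generic Schmidt-Kalman update (illustrative sketch, hypothetical API).

    x_a : (n_a,) active state estimate (updated)
    P   : (n_a+n_n, n_a+n_n) joint covariance, active block first
    r   : (m,) measurement residual
    H_a : (m, n_a) Jacobian w.r.t. the active state
    H_n : (m, n_n) Jacobian w.r.t. the nuisance state (not updated)
    R   : (m, m) measurement noise covariance
    """
    n_a = x_a.shape[0]
    P_aa = P[:n_a, :n_a]
    P_an = P[:n_a, n_a:]
    P_nn = P[n_a:, n_a:]

    # Innovation covariance uses the full Jacobian, so the nuisance
    # uncertainty is still accounted for.
    H = np.hstack([H_a, H_n])
    S = H @ P @ H.T + R

    # Gain for the active block only; the nuisance gain is forced to zero.
    # S is symmetric, so solve(S, B.T).T equals B @ inv(S).
    B = P_aa @ H_a.T + P_an @ H_n.T
    K_a = np.linalg.solve(S, B.T).T

    x_a_new = x_a + K_a @ r

    P_new = P.copy()
    P_new[:n_a, :n_a] = P_aa - K_a @ S @ K_a.T
    P_an_new = P_an - K_a @ (H_a @ P_an + H_n @ P_nn)
    P_new[:n_a, n_a:] = P_an_new
    P_new[n_a:, :n_a] = P_an_new.T
    # The nuisance block P_nn is intentionally left unchanged.
    return x_a_new, P_new
```

Because only the active block and the cross-covariance are rewritten, the per-update cost in this sketch grows linearly rather than quadratically with the size of the nuisance block, which is the usual motivation for a Schmidt-style update when the map is large.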
