Manifold Learning Benefits GANs


Dec 23, 2021

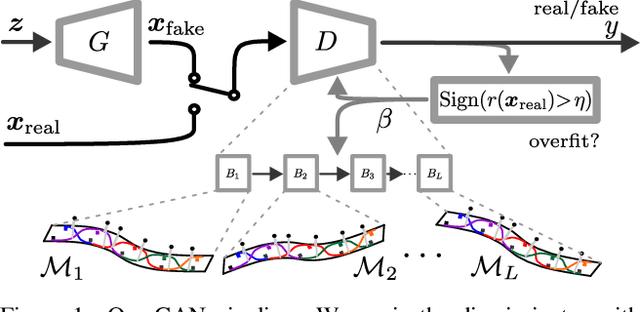

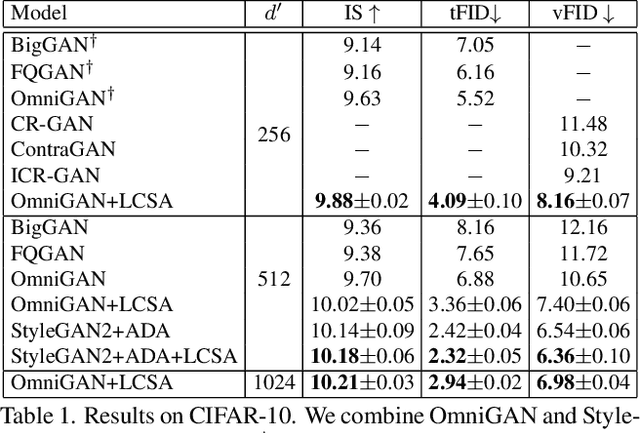

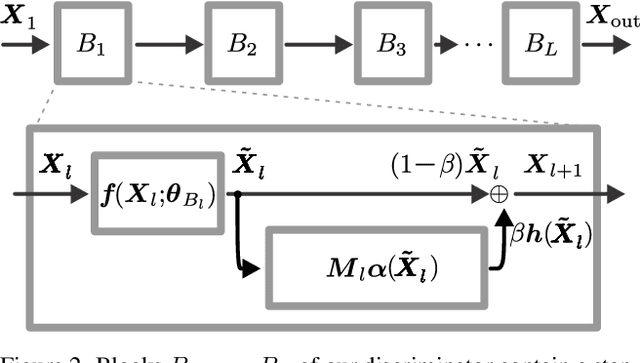

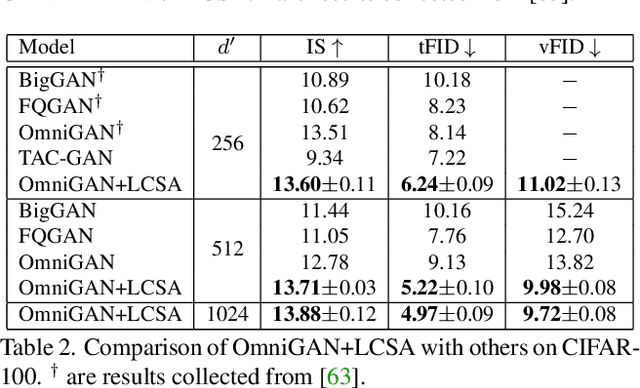

In this paper, we improve Generative Adversarial Networks by incorporating a manifold learning step into the discriminator. We consider locality-constrained linear and subspace-based manifolds, and locality-constrained non-linear manifolds. In our design, the manifold learning and coding steps are intertwined with layers of the discriminator, with the goal of attracting intermediate feature representations onto manifolds. We adaptively balance the discrepancy between feature representations and their manifold view, which represents a trade-off between denoising on the manifold and refining the manifold. We conclude that locality-constrained non-linear manifolds have the upper hand over linear manifolds due to their non-uniform density and smoothness. We show substantial improvements over different recent state-of-the-art baselines.
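To make the idea of a locality-constrained linear "manifold view" concrete, here is a minimal NumPy sketch. It is not the authors' implementation. It assumes a fixed dictionary of atoms, an LLE-style least-squares coding over the `k` nearest atoms with weights that sum to one, and a simple convex blend controlled by a scalar `beta`. The names `llc_project`, `blend`, `dictionary`, `k`, `reg`, and `beta` are all hypothetical and chosen only for illustration. The paper learns the manifold inside the discriminator and balances the two views adaptively, and the sketch does not attempt to reproduce either.

```python
import numpy as np


def llc_project(x, dictionary, k=5, reg=1e-4):
    """Reconstruct x from its k nearest dictionary atoms (assumed LLC-style coding).

    x: (d,) feature vector; dictionary: (n, d) atoms. Returns the manifold
    view of x, the indices of the atoms used, and their weights.
    """
    # Locality constraint: keep only the k atoms closest to x.
    d2 = np.sum((dictionary - x) ** 2, axis=1)
    idx = np.argsort(d2)[:k]
    atoms = dictionary[idx]                      # (k, d)

    # Least-squares weights that sum to one (same solve as in LLE).
    z = atoms - x                                # atoms centred at x
    gram = z @ z.T                               # (k, k) local Gram matrix
    gram += reg * (np.trace(gram) + 1e-12) * np.eye(len(idx))  # regularise
    w = np.linalg.solve(gram, np.ones(len(idx)))
    w /= w.sum()

    return w @ atoms, idx, w


def blend(x, x_manifold, beta):
    """Assumed convex mix of a feature and its manifold view.

    beta = 0 keeps the raw feature; beta = 1 snaps it onto the manifold.
    """
    return (1.0 - beta) * x + beta * x_manifold


# Toy usage: atoms lie on the horizontal axis, the feature sits above it.
dictionary = np.array([[float(t), 0.0] for t in range(10)])
x = np.array([2.5, 1.0])
x_manifold, idx, w = llc_project(x, dictionary, k=2)
h = blend(x, x_manifold, beta=0.5)
```

In this toy setting the two nearest atoms are `(2, 0)` and `(3, 0)`, so the manifold view is the feature with its off-axis component removed, and `beta` controls how strongly the blended feature `h` is pulled toward it. That pull is one way to read the trade-off the abstract describes between denoising on the manifold and keeping the original representation.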
