MACCIF-TDNN: Multi aspect aggregation of channel and context interdependence features in TDNN-based speaker verification

Paper and Code

Jul 07, 2021

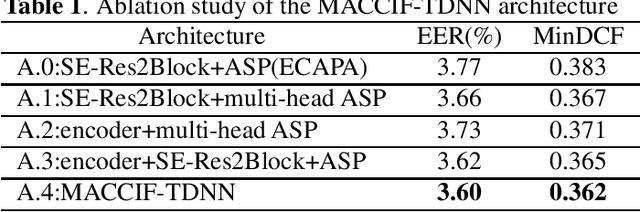

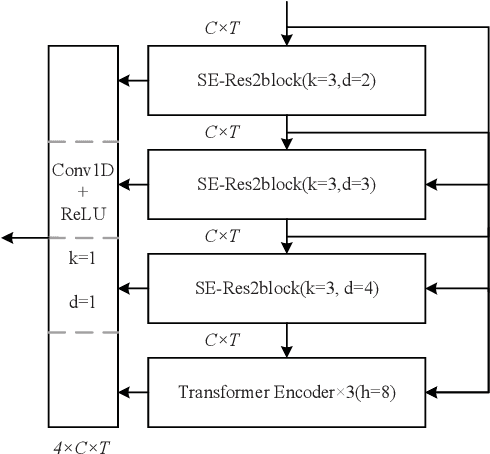

Most recent state-of-the-art results in speaker verification are achieved by the X-vector and its subsequent variants. In this paper, we propose a new network architecture, based on the Time Delay Neural Network (TDNN), that aggregates channel and context interdependence features from multiple aspects. First, we use SE-Res2Blocks, as in ECAPA-TDNN, to explicitly model channel interdependence for adaptive calibration of channel features, and to process local context features in a multi-scale way at a more granular level than conventional TDNN-based methods. Second, we explore using the encoder structure of the Transformer to model global context interdependence features at the utterance level, which better captures long-term temporal characteristics. Before the pooling layer, we aggregate the outputs of the SE-Res2Blocks and the Transformer encoder to leverage the complementary channel and context interdependence features that each has learned. Finally, instead of performing a single attentive statistics pooling, we find it beneficial to extend the pooling method in a multi-head way so that it can discriminate features from multiple aspects. The proposed MACCIF-TDNN architecture can outperform most state-of-the-art TDNN-based systems on the VoxCeleb1 test sets.
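To make the last two steps of the abstract concrete, the following is a minimal, dependency-free sketch of branch aggregation followed by multi-head attentive statistics pooling. It is not the authors' implementation. The abstract does not say how the two branches are combined or how the heads are parameterized, so this sketch assumes frame-wise concatenation of the two branch outputs and one linear attention vector per head, with the per-head weighted mean and standard deviation concatenated. All function names (`aggregate`, `attentive_stats`, `multi_head_attentive_stats`) are hypothetical.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of per-frame scores."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def aggregate(branch_a, branch_b):
    """Concatenate two branch outputs frame by frame.

    Assumption: the abstract only says the outputs are "aggregated";
    channel-wise concatenation is one plausible choice, not a confirmed one.
    """
    return [frame_a + frame_b for frame_a, frame_b in zip(branch_a, branch_b)]


def attentive_stats(frames, attn_vector):
    """Single-head attentive statistics pooling.

    frames: list of T frames, each a list of C floats.
    attn_vector: list of C floats used to score each frame (assumed form).
    Returns the attention-weighted mean and std, concatenated (length 2C).
    """
    scores = [sum(a * x for a, x in zip(attn_vector, frame)) for frame in frames]
    weights = softmax(scores)
    dim = len(frames[0])
    mean = [sum(w * f[c] for w, f in zip(weights, frames)) for c in range(dim)]
    second_moment = [
        sum(w * f[c] ** 2 for w, f in zip(weights, frames)) for c in range(dim)
    ]
    # Clamp the variance to avoid a negative value from rounding error.
    std = [math.sqrt(max(second_moment[c] - mean[c] ** 2, 1e-12)) for c in range(dim)]
    return mean + std


def multi_head_attentive_stats(frames, head_vectors):
    """Run one attentive statistics pooling per head and concatenate the results."""
    pooled = []
    for attn_vector in head_vectors:
        pooled.extend(attentive_stats(frames, attn_vector))
    return pooled


if __name__ == "__main__":
    # Toy stand-ins for the SE-Res2Block and Transformer encoder outputs (T=2 frames).
    local_branch = [[1.0], [3.0]]
    global_branch = [[2.0], [4.0]]
    frames = aggregate(local_branch, global_branch)
    embedding = multi_head_attentive_stats(frames, [[0.0, 0.0], [1.0, -1.0]])
    print(len(embedding))
```

With `H` heads over `C`-dimensional frames, the pooled vector has length `2 * C * H`, so each head contributes its own view of which frames matter. A trained system would learn the attention parameters and would typically use a small nonlinear scoring network rather than a single vector per head.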
