LW-GCN: A Lightweight FPGA-based Graph Convolutional Network Accelerator

Paper and Code

Nov 04, 2021

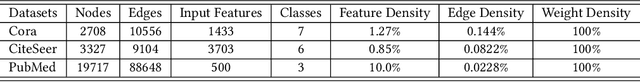

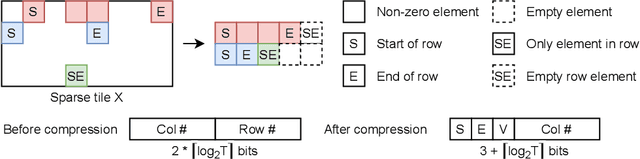

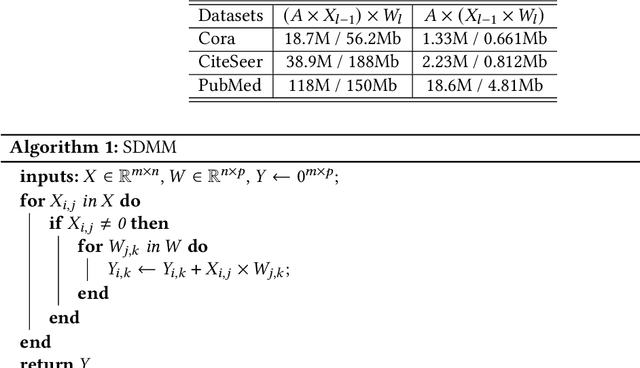

Graph convolutional networks (GCNs) have been introduced to effectively process non-euclidean graph data. However, GCNs incur large amounts of irregularity in computation and memory access, which prevents efficient use of traditional neural network accelerators. Moreover, existing dedicated GCN accelerators demand high memory volumes and are difficult to implement onto resource limited edge devices. In this work, we propose LW-GCN, a lightweight FPGA-based accelerator with a software-hardware co-designed process to tackle irregularity in computation and memory access in GCN inference. LW-GCN decomposes the main GCN operations into sparse-dense matrix multiplication (SDMM) and dense matrix multiplication (DMM). We propose a novel compression format to balance workload across PEs and prevent data hazards. Moreover, we apply data quantization and workload tiling, and map both SDMM and DMM of GCN inference onto a uniform architecture on resource limited hardware. Evaluation on GCN and GraphSAGE are performed on Xilinx Kintex-7 FPGA with three popular datasets. Compared to existing CPU, GPU, and state-of-the-art FPGA-based accelerator, LW-GCN reduces latency by up to 60x, 12x and 1.7x and increases power efficiency by up to 912x., 511x and 3.87x, respectively. Furthermore, compared with NVIDIA's latest edge GPU Jetson Xavier NX, LW-GCN achieves speedup and energy savings of 32x and 84x, respectively.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge