Look ATME: The Discriminator Mean Entropy Needs Attention

Paper and Code

Apr 18, 2023

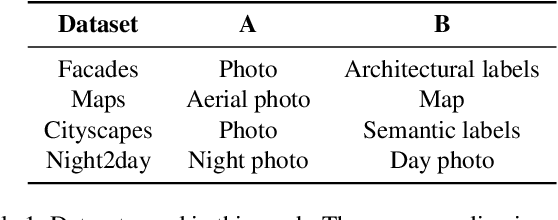

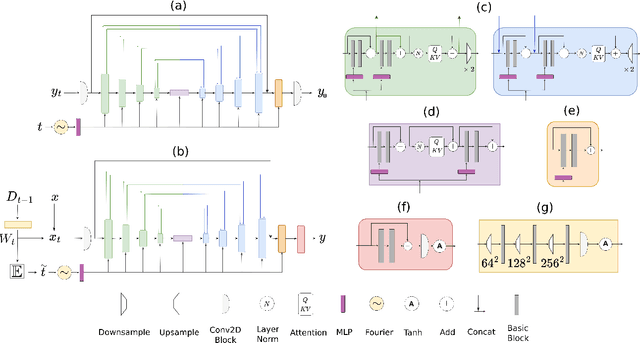

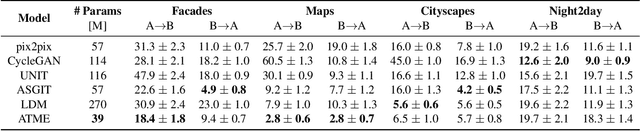

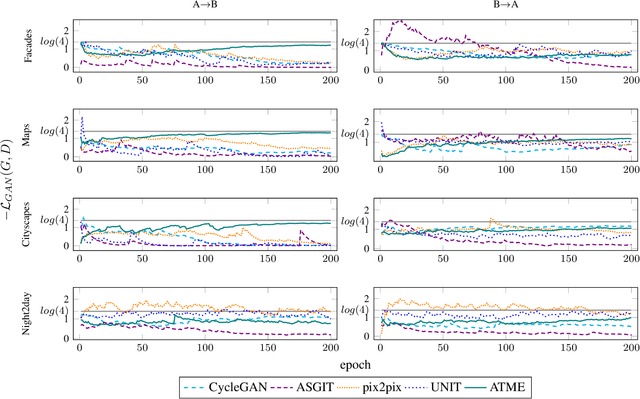

Generative adversarial networks (GANs) are successfully used for image synthesis but are known to be unstable during training. In contrast, probabilistic diffusion models (DMs) are stable and generate high-quality images, at the cost of an expensive sampling procedure. In this paper, we introduce a simple method that allows GANs to converge stably to their theoretical optimum while bringing in the denoising machinery from DMs. The two approaches are combined into a simpler model (ATME) that requires only a single forward pass at inference, making predictions cheaper and more accurate than those of DMs and popular GANs. ATME breaks an information asymmetry present in most GAN models, in which the discriminator has spatial knowledge of where the generator is failing but the generator does not. To restore the information symmetry, the generator is endowed with knowledge of the entropic state of the discriminator, which is leveraged to let the adversarial game converge towards equilibrium. We demonstrate the power of our method on several image-to-image translation tasks, showing performance superior to state-of-the-art methods at a lower cost. Code is available at https://github.com/DLR-MI/atme.
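To make the "entropic state of the discriminator" concrete, here is a minimal sketch of one way to compute a per-patch entropy map and its mean from a discriminator's output probabilities. It is not taken from the ATME repository: the function names, the choice of bits as the unit, and the 2x2 example maps are assumptions for illustration only. It relies on the standard GAN result that, at the theoretical optimum, the discriminator outputs 0.5 everywhere, which is the maximum-entropy state.

```python
import math

def binary_entropy(p, eps=1e-12):
    """Shannon entropy in bits of a Bernoulli variable with P(real) = p."""
    # Clamp to avoid log(0) when the discriminator is fully confident.
    p = min(max(p, eps), 1.0 - eps)
    return -(p * math.log2(p) + (1.0 - p) * math.log2(1.0 - p))

def entropy_map(prob_map):
    """Per-patch entropy for a 2D grid of discriminator probabilities."""
    return [[binary_entropy(p) for p in row] for row in prob_map]

def mean_entropy(prob_map):
    """Average the entropy map into a single scalar."""
    values = [h for row in entropy_map(prob_map) for h in row]
    return sum(values) / len(values)

# Hypothetical 2x2 patch outputs (assumed values, not from the paper).
undecided = [[0.5, 0.5], [0.5, 0.5]]        # discriminator cannot tell real from fake
confident = [[0.99, 0.01], [0.98, 0.02]]    # discriminator is sure about every patch

print(mean_entropy(undecided))  # maximal: 1 bit per patch
print(mean_entropy(confident))  # close to 0
```

In this sketch, low-entropy patches mark regions where the discriminator is confident, which is the kind of spatial information the abstract says the generator normally lacks. How ATME actually encodes and feeds this signal to the generator is not described here; the linked repository is the authoritative source.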
