Long-term Planning by Short-term Prediction

Paper and Code

Feb 04, 2016

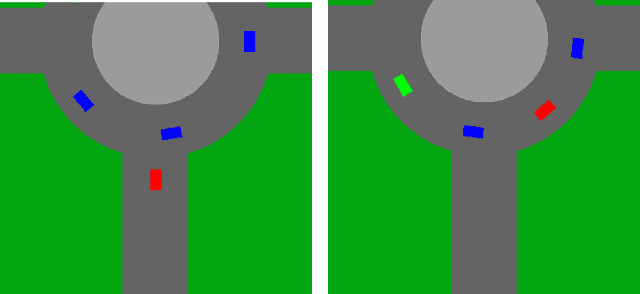

We consider planning problems, that often arise in autonomous driving applications, in which an agent should decide on immediate actions so as to optimize a long term objective. For example, when a car tries to merge in a roundabout it should decide on an immediate acceleration/braking command, while the long term effect of the command is the success/failure of the merge. Such problems are characterized by continuous state and action spaces, and by interaction with multiple agents, whose behavior can be adversarial. We argue that dual versions of the MDP framework (that depend on the value function and the $Q$ function) are problematic for autonomous driving applications due to the non Markovian of the natural state space representation, and due to the continuous state and action spaces. We propose to tackle the planning task by decomposing the problem into two phases: First, we apply supervised learning for predicting the near future based on the present. We require that the predictor will be differentiable with respect to the representation of the present. Second, we model a full trajectory of the agent using a recurrent neural network, where unexplained factors are modeled as (additive) input nodes. This allows us to solve the long-term planning problem using supervised learning techniques and direct optimization over the recurrent neural network. Our approach enables us to learn robust policies by incorporating adversarial elements to the environment.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge