LoByITFL: Low Communication Secure and Private Federated Learning

Paper and Code

May 29, 2024

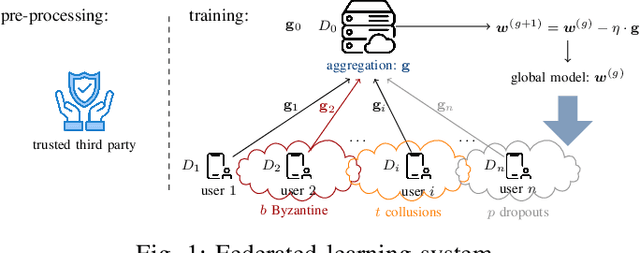

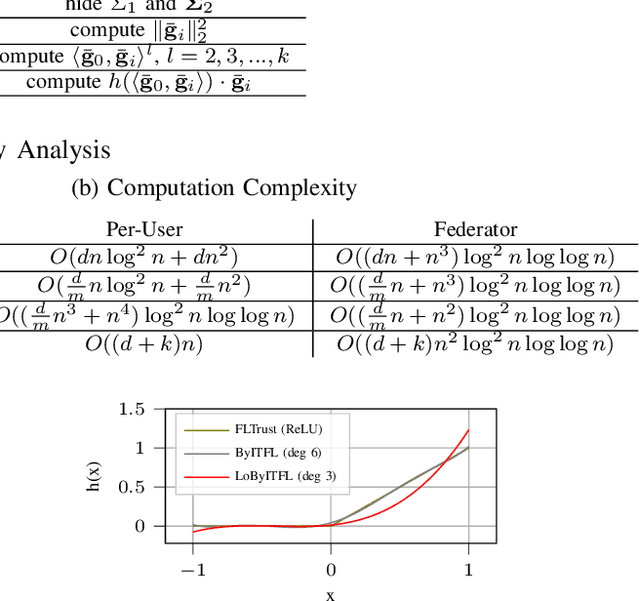

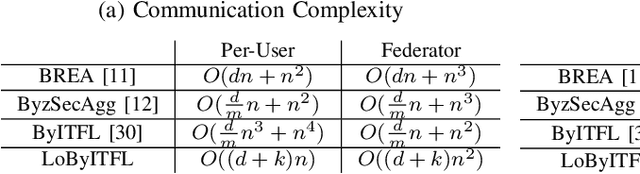

Federated Learning (FL) faces several challenges, such as the privacy of the clients data and security against Byzantine clients. Existing works treating privacy and security jointly make sacrifices on the privacy guarantee. In this work, we introduce LoByITFL, the first communication-efficient Information-Theoretic (IT) private and secure FL scheme that makes no sacrifices on the privacy guarantees while ensuring security against Byzantine adversaries. The key ingredients are a small and representative dataset available to the federator, a careful transformation of the FLTrust algorithm and the use of a trusted third party only in a one-time preprocessing phase before the start of the learning algorithm. We provide theoretical guarantees on privacy and Byzantine-resilience, and provide convergence guarantee and experimental results validating our theoretical findings.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge